What is Deepchecks?

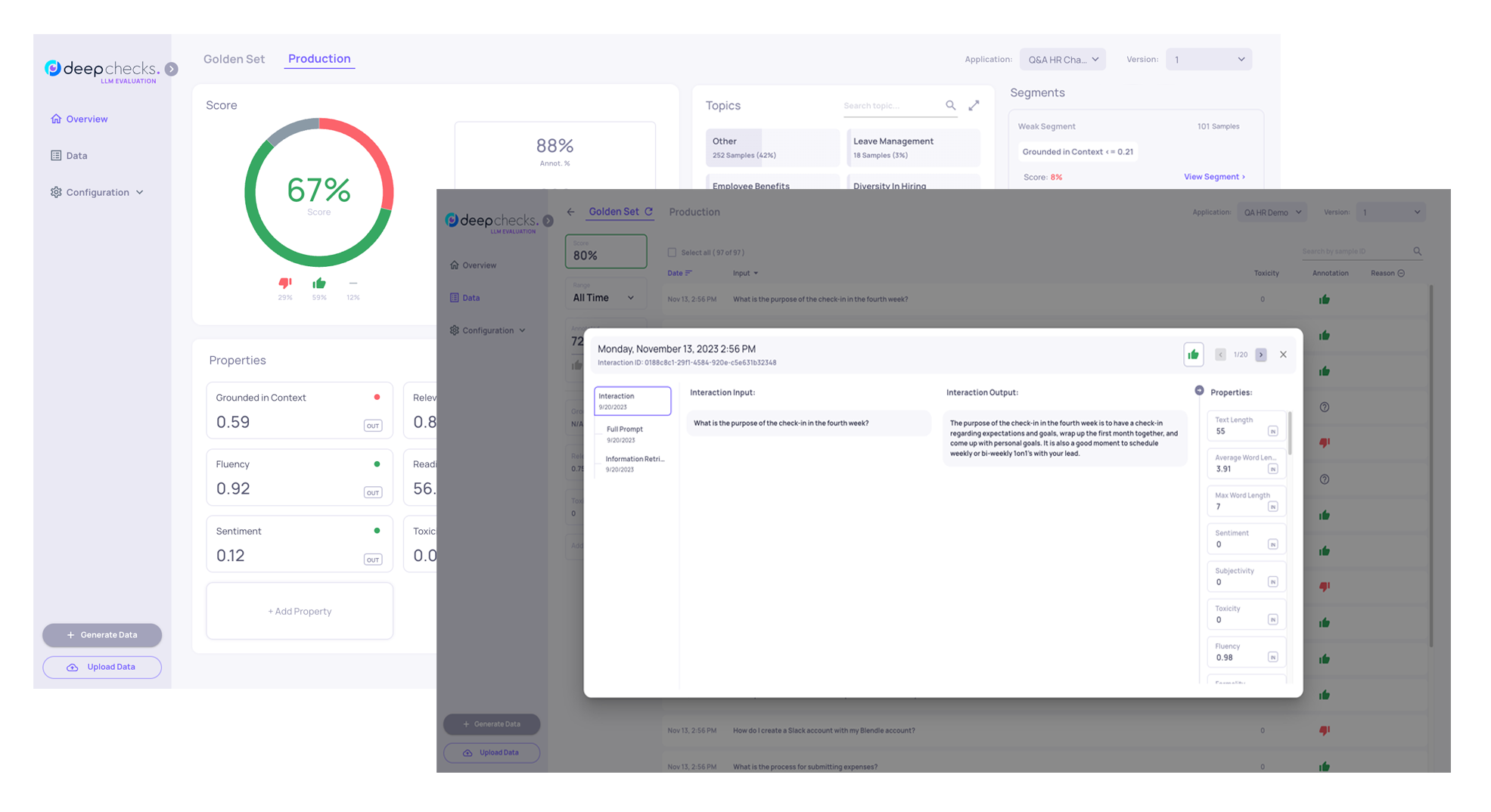

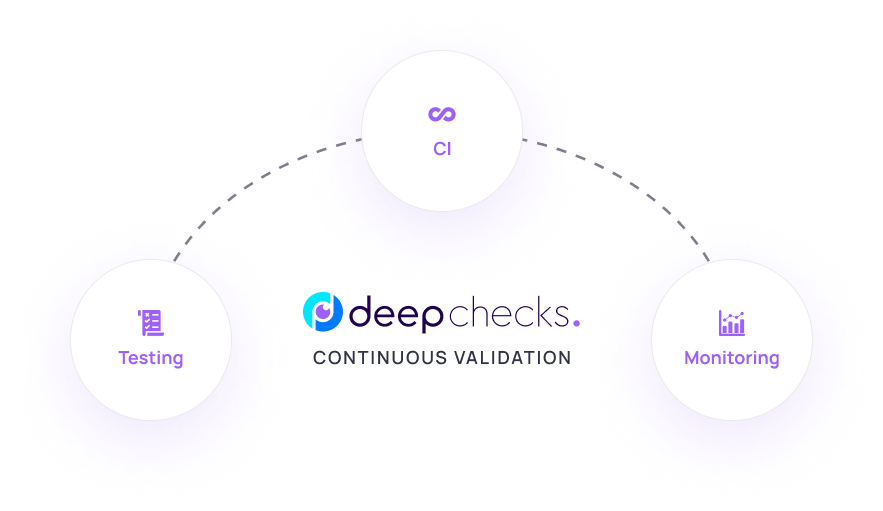

Deepchecks is an evaluation platform for LLM-based and agentic AI applications - helping you understand how your AI system performs, find where it fails, and continuously improve it from development to production.

Whether you are building a RAG pipeline, a multi-agent workflow, or any other LLM-powered product, Deepchecks shows you how your AI system actually behaves, not just in a lab but across its full lifecycle from early development to production.

You can iterate on prompts, compare model versions, test an agentic workflow end-to-end, and monitor production traffic, all with one structured, scalable way to measure quality and catch problems before your users do.

What you can do with Deepchecks

Evaluate quality automatically - Deepchecks calculates properties (quality metrics) on every interaction: hallucination likelihood, answer relevance, instruction following, toxicity, and many more. These run automatically on every data upload, so you always have a quantified view of quality.

Annotate at scale - Rather than manually reviewing hundreds of interactions, let Deepchecks estimate whether each interaction is "Good" or "Bad" using configurable rules applied to its property scores. You can then reserve manual review for the interactions that need it.

Compare versions - When you change a prompt, swap a model, or update a retrieval strategy, Deepchecks lets you run the same evaluation set across versions and see exactly what improved and what regressed.

Evaluate AI agents - Deepchecks has first-class support for agentic and multi-agent workflows. It logs and evaluates every span, tool call, and LLM invocation in a trace - giving you component-level quality scores and one-click root cause analysis when something goes wrong.

Monitor production - Connect your production traffic and track score distributions, annotation rates, and property trends over time. Deepchecks integrates with Datadog, New Relic, and AWS CloudWatch for alerting and dashboards.

Find root causes - When a version underperforms, Deepchecks helps you identify which segment, topic, or component is responsible - so you can fix the right thing.
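To make "Annotate at scale" and "Compare versions" more concrete, here is a minimal, library-independent sketch in Python. It is not the Deepchecks SDK or its rule engine. The property names (`hallucination`, `relevance`), the 0 to 1 score scale, the thresholds, and the helpers `Rule`, `annotate`, and `compare_versions` are all hypothetical. The sketch labels an interaction "Bad" if any rule is violated. It then lists which interactions in a shared evaluation set improved or regressed between two versions.

```python
# Hypothetical sketch: NOT the Deepchecks API, just the general idea of
# rule-based annotation over property scores and version comparison.
from dataclasses import dataclass

# Property scores for one interaction, e.g. {"relevance": 0.9}.
# Names and the 0-1 scale are illustrative assumptions.
Properties = dict[str, float]


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule: an interaction is 'Bad' if this property is out of range."""
    prop: str
    threshold: float
    higher_is_worse: bool  # True for e.g. hallucination, False for e.g. relevance

    def violated(self, props: Properties) -> bool:
        value = props[self.prop]
        return value > self.threshold if self.higher_is_worse else value < self.threshold


def annotate(props: Properties, rules: list[Rule]) -> str:
    """Estimate a label: 'Bad' if any rule is violated, otherwise 'Good'."""
    return "Bad" if any(rule.violated(props) for rule in rules) else "Good"


def compare_versions(
    baseline: list[Properties], candidate: list[Properties], rules: list[Rule]
) -> dict[str, list[int]]:
    """Compare two versions scored on the same evaluation set (aligned by index)."""
    if len(baseline) != len(candidate):
        raise ValueError("both versions must be run on the same evaluation set")
    result: dict[str, list[int]] = {"improved": [], "regressed": []}
    for i, (old, new) in enumerate(zip(baseline, candidate)):
        before, after = annotate(old, rules), annotate(new, rules)
        if before == "Bad" and after == "Good":
            result["improved"].append(i)
        elif before == "Good" and after == "Bad":
            result["regressed"].append(i)
    return result


if __name__ == "__main__":
    rules = [
        Rule("hallucination", 0.5, higher_is_worse=True),
        Rule("relevance", 0.6, higher_is_worse=False),
    ]
    v1 = [
        {"hallucination": 0.1, "relevance": 0.9},  # Good
        {"hallucination": 0.7, "relevance": 0.8},  # Bad: likely hallucination
        {"hallucination": 0.2, "relevance": 0.7},  # Good
    ]
    v2 = [
        {"hallucination": 0.1, "relevance": 0.95},  # Good
        {"hallucination": 0.3, "relevance": 0.8},   # Good: fixed
        {"hallucination": 0.2, "relevance": 0.4},   # Bad: off-topic answer
    ]
    print(compare_versions(v1, v2, rules))
    # -> {'improved': [1], 'regressed': [2]}
```

Treating any single violated rule as "Bad" is the simplest possible policy. The sketch keeps rules as plain data so that a different policy, such as per-property weights, could be swapped in without touching the comparison logic.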

Who is this for?

Deepchecks is used by teams building LLM-powered products - including developers, ML engineers, and product managers who care about quality. It works for:

- RAG and Q&A applications - question answering, document retrieval, knowledge bases

- Agentic and multi-agent systems - LangGraph, CrewAI, Google ADK, and custom agents

- Summarization, classification, generation, and chat - see the full list in Supported Use Cases

- Any LLM pipeline - via the Python SDK, OpenTelemetry integrations, or CSV upload

How to get started

These docs are organized as a journey:

- Get Started (this section) - Try Deepchecks with sample data and learn the core concepts

- Integrate Your Data - Connect your real LLM pipeline to Deepchecks

- Core Features - Understand how evaluation works and use the analysis tools to improve your application

To begin, choose the quickstart that works best for you:

- SDK Quickstart - Install the Python SDK and see your first evaluation results in 5 minutes. Best for developers.

- UI Quickstart - Upload a CSV file and explore the platform without writing any code. Best for anyone who wants to see the product first.

If you already have data and just need to integrate, go directly to Integrate Your Data.
