Built-in Properties

Deepchecks' proprietary quality models - a complete catalog of what is calculated on your interactions automatically, organized by category.

Built-in properties are created and maintained by Deepchecks. They run automatically on every new interaction - no configuration required. They range from lightweight text analysis to complex proprietary models, some using Small Language Models (no LLM API costs) and others using LLM calls where noted.

Built-in Property Icon

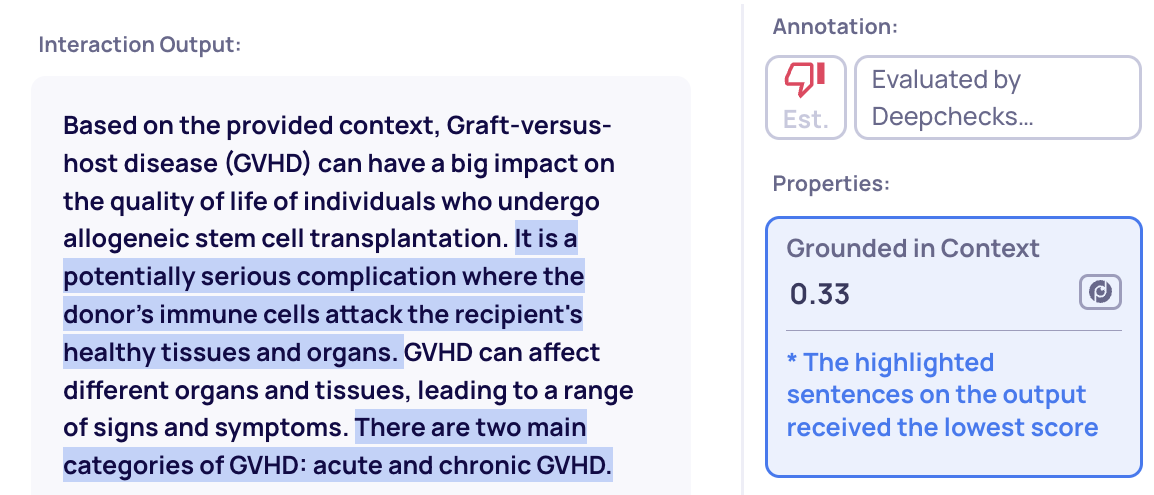

Many properties include explainability: click any property score on an interaction to see the reasoning or the specific text that drove the score.

Explainability example: highlighted text shows what drove the score

Property catalog

Quality & Accuracy

Does the output correctly and fully address the input? These properties measure task-level quality.

| Property | Score | What it measures |

|---|---|---|

| Relevance | 0-1 | How relevant the output is to the input |

| Expected Output Similarity | 1-5 | How similar the output is to a reference ground truth |

| Completeness | 1-5 | Whether the output fully addresses all components of the request |

| Intent Fulfillment | 1-5 | How well the output follows user instructions across a multi-turn conversation |

| Instruction Fulfillment | 1-5 | How accurately the output follows instructions specified in the input |

| Coverage | 0-1 | How much key information from the input is preserved in the output (summarization) |

→ Quality & Accuracy Properties

Safety & Risk

Is the output harmful, leaking data, or failing to respond? These properties detect issues that need immediate attention.

| Property | Score | What it measures |

|---|---|---|

| Input Safety | 0-1 | Whether the input contains harmful, manipulative, or jailbreak attempts |

| PII Risk | 0-1 | Whether the output contains personally identifiable information |

| Toxicity | 0-1 | How harmful or offensive the output is |

| Avoidance | Categorical | Whether the model refused to answer, and why (Missing Knowledge, Policy, or Other) |

| Error Detection | Categorical | Whether the output is a valid response, a system/tool error, or empty |

Text Quality & Style

How well-written is the output? These properties measure stylistic and structural characteristics.

| Property | Score | What it measures |

|---|---|---|

| Information Density | 0-1 | How information-dense the output is (vs. filler, hedging, or evasion) |

| Compression Ratio | Ratio | How much shorter the output is compared to the input |

| Reading Ease | 0-100 | How easy the output is to read (Flesch score) |

| Fluency | 0-1 | How well-written the output is grammatically |

| Formality | 0-1 | How formal the output tone is |

| Sentiment | -1 to 1 | The emotional tone of the output |

| Content Type | Categorical | Whether the output is JSON, SQL, or other text |

| Invalid Links | 0-1 | Ratio of broken links in the output |

→ Text Quality & Style Properties

RAG Use-Case Properties

Specialized properties for evaluating retrieval-augmented generation pipelines. Cover document classification (Platinum, Gold, Irrelevant) and retrieval quality metrics.

| Property | What it measures |

|---|---|

| Grounded in Context | Whether the output is grounded in the retrieved documents (hallucination detection) |

| Retrieval Relevance | How relevant the retrieved documents are to the query |

| Retrieval Coverage | Whether the retrieved documents contain all the information needed to answer |

| nDCG | Ranking quality of the retrieved documents |

| Retrieval Utilization | How much of the retrieved context was actually used in the output |

| Retrieval Precision | Ratio of retrieved documents that were actually useful |

Agent Use-Case Properties

Specialized properties for evaluating agentic workflows. Assess whether the agent planned effectively, used tools correctly, and followed through on its goals.

| Property | What it measures |

|---|---|

| Plan Efficiency | How well the agent built and followed an effective plan |

| Tool Coverage | Whether the tools used provided the coverage needed to complete the goal |

| Tool Abuse | Whether the agent called tools it did not need |

| Tool Completeness | Whether each tool response fully satisfied its intended purpose |

| Instruction Following | Whether the agent followed the instructions given to it |

| Reasoning Integrity | Whether the agent's reasoning chain was sound and consistent |

Updated about 2 months ago