Manually Annotate Your Data

How to use Deepchecks' property scores and automatic annotations to annotate your data faster and more consistently - directly in the UI or via the SDK.

Manual annotations feed the Deepchecks Evaluator - the more interactions you label, the more accurate automatic annotation becomes. Deepchecks makes manual annotation faster by surfacing property scores and automatic annotation reasoning alongside each interaction, so you're not reviewing blind.

Annotating in the UI

Here's a practical workflow for annotating a version directly in Deepchecks:

- Upload the version you want to annotate. If you have previously annotated versions with similar interactions (e.g., the same evaluation set on an earlier version), upload those too - the similarity mechanism will carry annotations forward.

-

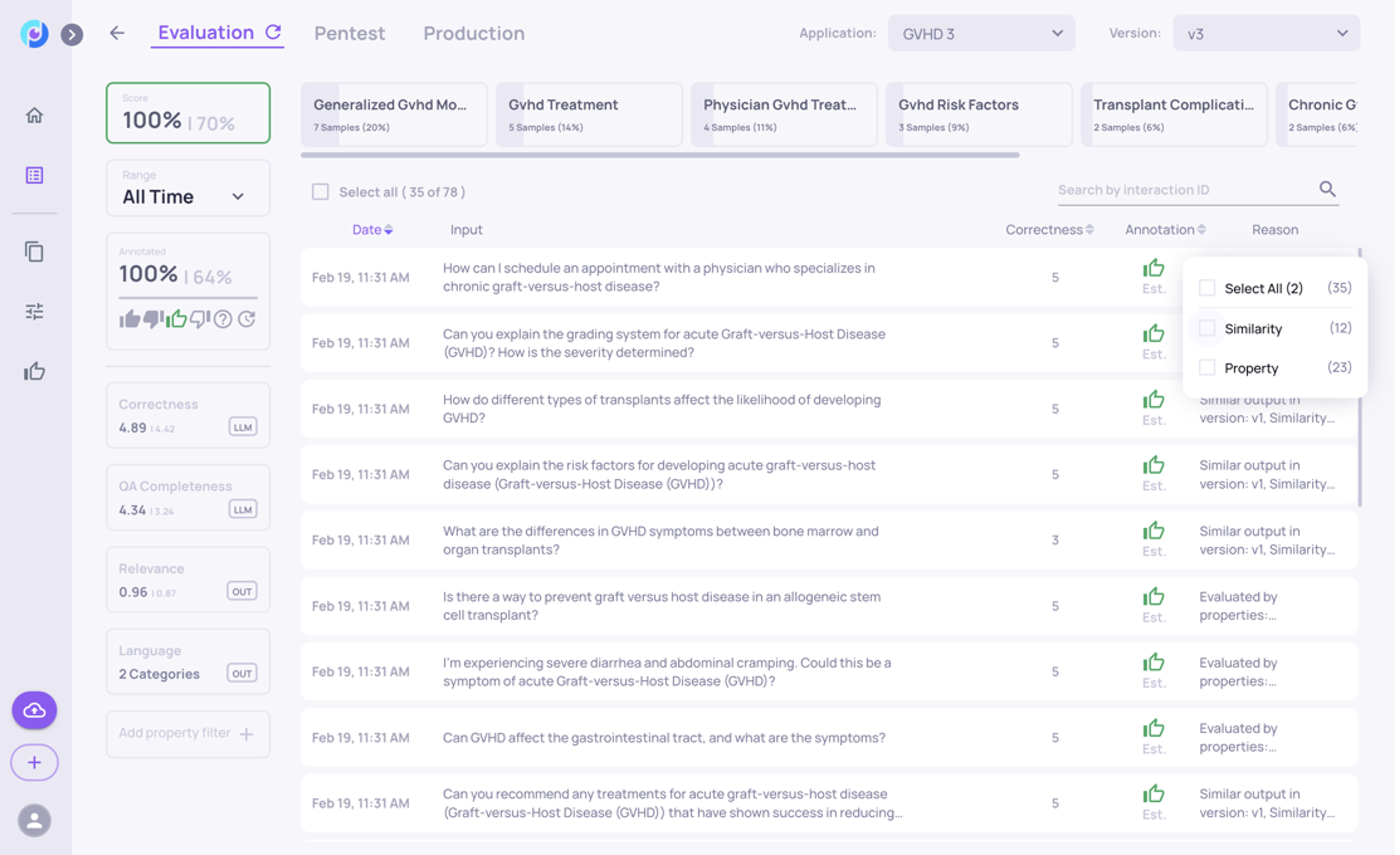

Go to the Interactions screen and select the version.

- Start with the interactions estimated as Good. Filter by estimated good (thumbs-up) - these are the easiest to confirm and let you build a feel for what "good" looks like in your application.

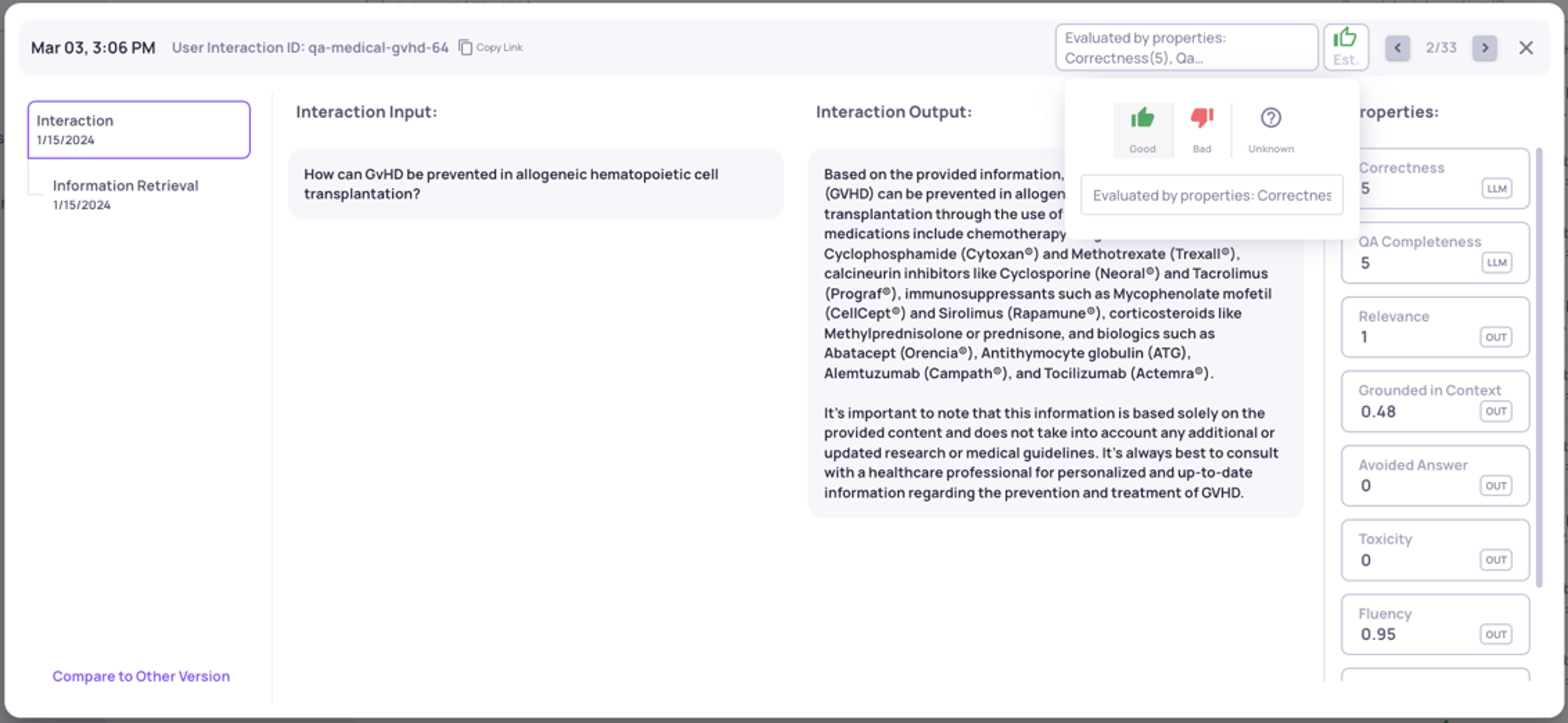

- Click any interaction to open the detail view. You'll see the input, output, property scores, and the automatic annotation with its reasoning.

- Click the annotation symbol at the top to set it as Good, Bad, or Unknown. The property scores and automatic reasoning help you make the call quickly - you're confirming or overriding, not reviewing from scratch.

-

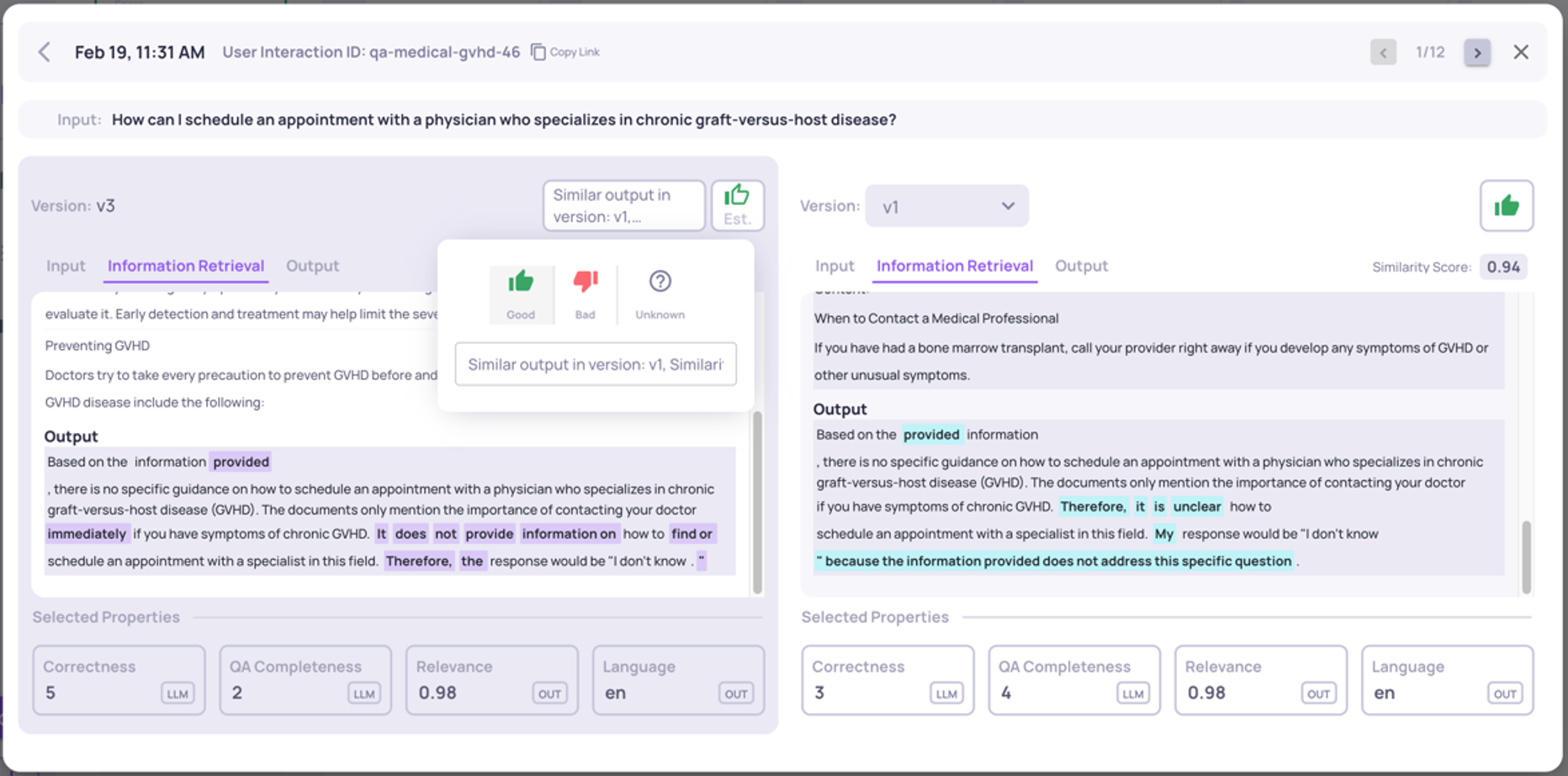

Use the comparison view to review the current interaction alongside the same input in other versions. If a similar interaction was annotated in a previous version, you'll see the similarity score and output diff - making it easy to confirm the annotation carries over.

- Use the arrows at the top to move through interactions without returning to the list.

- Once you've finished with estimated-good interactions, repeat for estimated-bad, then unknown. This order is recommended because you'll be reviewing similar failure patterns in sequence.
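The same review order can be applied to exported data, for example when building a queue for an external annotation tool. The sketch below is a minimal illustration in plain Python. The record layout and the `estimated_annotation` field name are assumptions made for this example, not the SDK's schema.

```python
# Hypothetical records: the field names here are illustrative only and are
# not taken from the Deepchecks export format.
REVIEW_ORDER = {"good": 0, "bad": 1, "unknown": 2}


def review_queue(interactions):
    """Order interactions estimated-good first, then estimated-bad, then unknown.

    Records with a missing or unrecognized estimate are placed last.
    """
    return sorted(
        interactions,
        key=lambda row: REVIEW_ORDER.get(
            row.get("estimated_annotation"), len(REVIEW_ORDER)
        ),
    )


interactions = [
    {"user_interaction_id": "a", "estimated_annotation": "bad"},
    {"user_interaction_id": "b", "estimated_annotation": "unknown"},
    {"user_interaction_id": "c", "estimated_annotation": "good"},
    {"user_interaction_id": "d", "estimated_annotation": "bad"},
]

queue = review_queue(interactions)
print([row["user_interaction_id"] for row in queue])
```

Because Python's `sorted` is stable, interactions within the same group keep their original relative order, so any grouping already present in the export is preserved.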

Annotating via SDK or external tools

If you prefer to annotate in an external platform, use get_data to download interactions with their property scores and estimated annotations, annotate externally, then upload annotations back via the SDK.

```python
from deepchecks_llm_client.client import DeepchecksLLMClient
# LogInteraction is assumed to live in data_types alongside EnvType and
# AnnotationType; adjust the import if your SDK version places it elsewhere.
from deepchecks_llm_client.data_types import AnnotationType, EnvType, LogInteraction

dc_client = DeepchecksLLMClient(api_token="YOUR_API_KEY")

# Upload a manual annotation for a specific interaction
dc_client.log_interaction(
    app_name="my-app",
    version_name="v1",
    env_type=EnvType.EVAL,
    interaction=LogInteraction(
        user_interaction_id="interaction-123",
        input="...",
        output="...",
        annotation=AnnotationType.BAD,  # or GOOD, UNKNOWN
    ),
)
```

Manual annotations always override estimated ones and are used in session annotation calculations.
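When annotations come back from an external tool, it can help to validate the labels before uploading them. The sketch below shows one way to do that in plain Python, along with the "manual overrides estimated" rule. It does not call the SDK. The CSV layout, the lowercase label strings, and the helper names `load_manual_annotations` and `effective_annotation` are all assumptions made for this example.

```python
import csv
import io

# Hypothetical label strings; map these to AnnotationType values before upload.
VALID_LABELS = {"good", "bad", "unknown"}


def load_manual_annotations(csv_text):
    """Parse `user_interaction_id,annotation` rows, rejecting unexpected labels."""
    manual = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        label = row["annotation"].strip().lower()
        if label not in VALID_LABELS:
            raise ValueError(
                f"unexpected label {label!r} for {row['user_interaction_id']}"
            )
        manual[row["user_interaction_id"]] = label
    return manual


def effective_annotation(interaction_id, manual, estimated):
    """Return the manual label when one exists, otherwise the estimated one."""
    return manual.get(interaction_id, estimated.get(interaction_id))


external_csv = "user_interaction_id,annotation\ninteraction-123,bad\n"
estimated = {"interaction-123": "good", "interaction-456": "good"}

manual = load_manual_annotations(external_csv)
print(effective_annotation("interaction-123", manual, estimated))  # bad
print(effective_annotation("interaction-456", manual, estimated))  # good
```

Rejecting unknown label strings up front means a typo in the external tool fails early rather than being uploaded as an unintended annotation.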

What's next

- Manual Annotations - conceptual overview of how manual annotations work and their role in the pipeline

- Root Cause Analysis - once you've annotated a set, use RCA to investigate patterns in the Bad interactions

- Export Data for Offline Analysis - download annotated data for fine-tuning or external analysis
