Version Comparison

Compare versions of your LLM application side by side - from high-level score overviews to granular interaction-by-interaction differences.

Compare prompt variants, model swaps, or configuration changes to find the best trade-off between quality and cost. Deepchecks provides comparison at three levels: high-level metrics, CSV export for offline analysis, and granular interaction-by-interaction drill-down.

Comparing apples to apples: To fairly compare versions, run them on the same input data and use the user_interaction_id to match interactions across versions. See Dataset Management for how to build and maintain a consistent evaluation set.
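As a rough illustration of the matching step, the sketch below pairs interactions from two versions by user_interaction_id and lists the ids that appear in only one version. The records, the "output" field, and the pair_interactions helper are hypothetical stand-ins. Only the user_interaction_id field name comes from the text above, and this is not the Deepchecks SDK.

```python
# Hypothetical interaction records for two versions run on (mostly) the same inputs.
# Only the "user_interaction_id" field name comes from the documentation.
v1 = [
    {"user_interaction_id": "q-001", "output": "Paris"},
    {"user_interaction_id": "q-002", "output": "42"},
    {"user_interaction_id": "q-003", "output": "blue"},
]
v2 = [
    {"user_interaction_id": "q-001", "output": "Paris, France"},
    {"user_interaction_id": "q-002", "output": "42"},
    {"user_interaction_id": "q-004", "output": "green"},
]


def pair_interactions(a, b):
    """Pair records by user_interaction_id and report ids found in only one version."""
    by_id_a = {r["user_interaction_id"]: r for r in a}
    by_id_b = {r["user_interaction_id"]: r for r in b}
    shared = sorted(by_id_a.keys() & by_id_b.keys())
    pairs = [(by_id_a[i], by_id_b[i]) for i in shared]
    only_a = sorted(by_id_a.keys() - by_id_b.keys())
    only_b = sorted(by_id_b.keys() - by_id_a.keys())
    return pairs, only_a, only_b


pairs, only_v1, only_v2 = pair_interactions(v1, v2)
print(f"{len(pairs)} matched pairs; only in v1: {only_v1}; only in v2: {only_v2}")
```

Unmatched ids are a sign that the two versions were not run on the same evaluation set, so any comparison between them is only meaningful for the matched pairs.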

High-level comparison

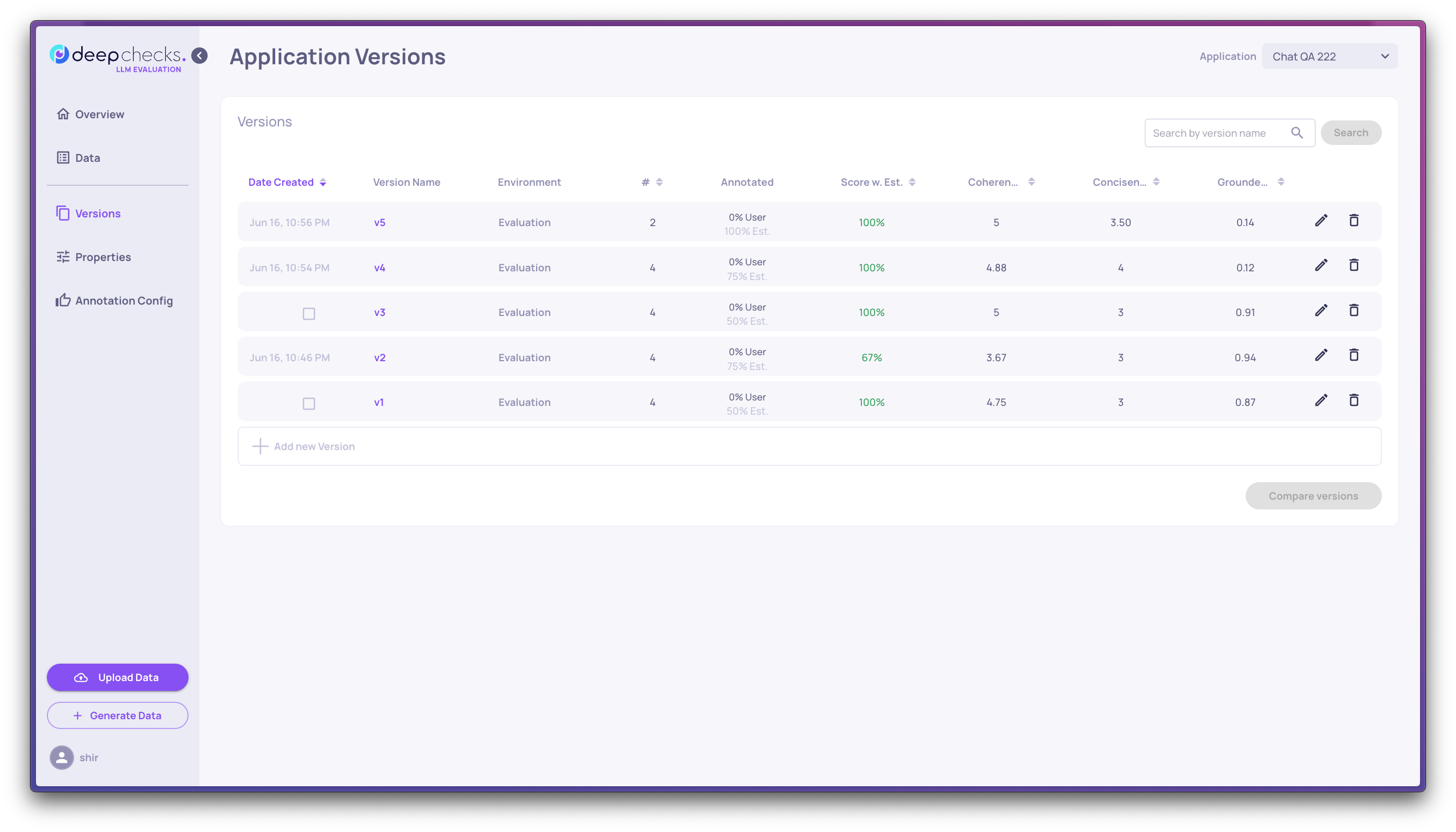

The Versions screen shows all versions of an application with their key metrics. Sort by "Score w. Est" (the average automatic annotation score) to quickly rank versions, then examine property averages, latency, and token usage for the top candidates.

This comparison is always done at the interaction type level, so you can see how each version performs on Q&A, Agent, or any other type independently.

To focus on specific versions, click the up-arrow icon next to any version to "stick" it to the top of the list. This is useful when narrowing down to a few finalists.

CSV export

After the high-level review, you can export results to a CSV file for deeper analysis in external tools. The export includes all selected versions side by side with:

- Overall performance assessment

- System metrics (latency, token usage)

- Score breakdowns by main properties

How to use it:

- Go to the Versions screen

- Select the versions you want to compare

- Click Export to CSV

Note: The CSV focuses on the numerical properties highlighted in the Overview - not every property on the platform. Unlike the granular comparison, the CSV export does not enforce that all versions come from the same dataset, so keep this in mind when interpreting results.
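Once exported, the file can be analyzed with any CSV-capable tool. The sketch below ranks versions by score and breaks ties by lower token usage, using only the Python standard library. The column names (version, avg_score, avg_latency_s, avg_tokens) and the sample rows are assumptions made for illustration; check the header row of your actual export and adjust accordingly.

```python
import csv
import io

# Hypothetical stand-in for an exported file; real column names will differ.
SAMPLE_EXPORT = """version,avg_score,avg_latency_s,avg_tokens
v1-baseline,0.71,1.9,820
v2-new-prompt,0.78,2.4,1130
v3-smaller-model,0.78,1.1,640
"""


def rank_versions(csv_text):
    """Sort versions by score (highest first), then by token usage (lowest first)."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    for row in rows:
        row["avg_score"] = float(row["avg_score"])
        row["avg_latency_s"] = float(row["avg_latency_s"])
        row["avg_tokens"] = int(row["avg_tokens"])
    return sorted(rows, key=lambda r: (-r["avg_score"], r["avg_tokens"]))


ranked = rank_versions(SAMPLE_EXPORT)
for row in ranked:
    print(row["version"], row["avg_score"], row["avg_latency_s"], row["avg_tokens"])
```

To read a real export, replace io.StringIO(csv_text) with an opened file handle. Because the export does not enforce a shared dataset, a ranking like this is only a fair comparison if the versions were run on the same inputs.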

Granular comparison

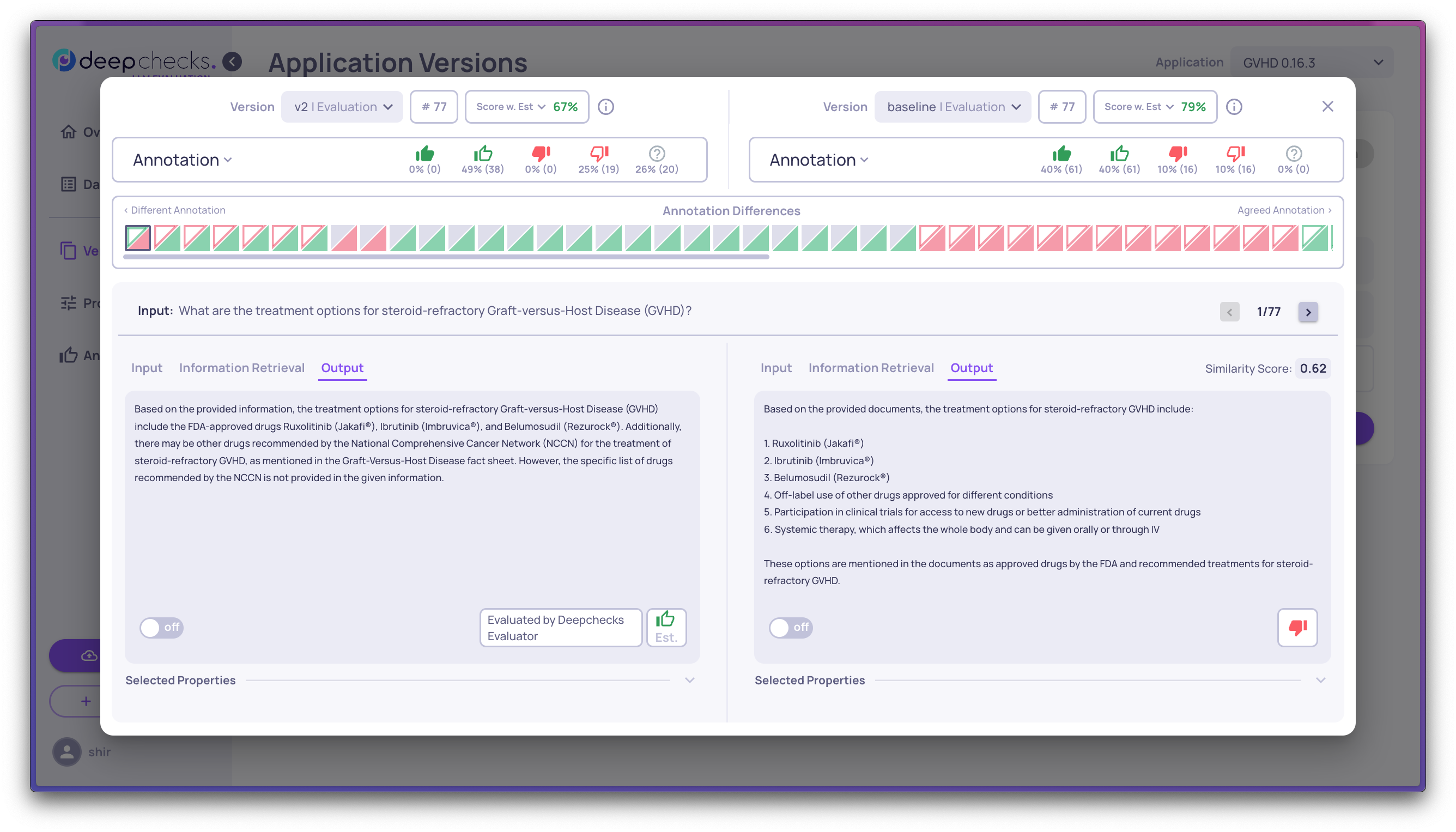

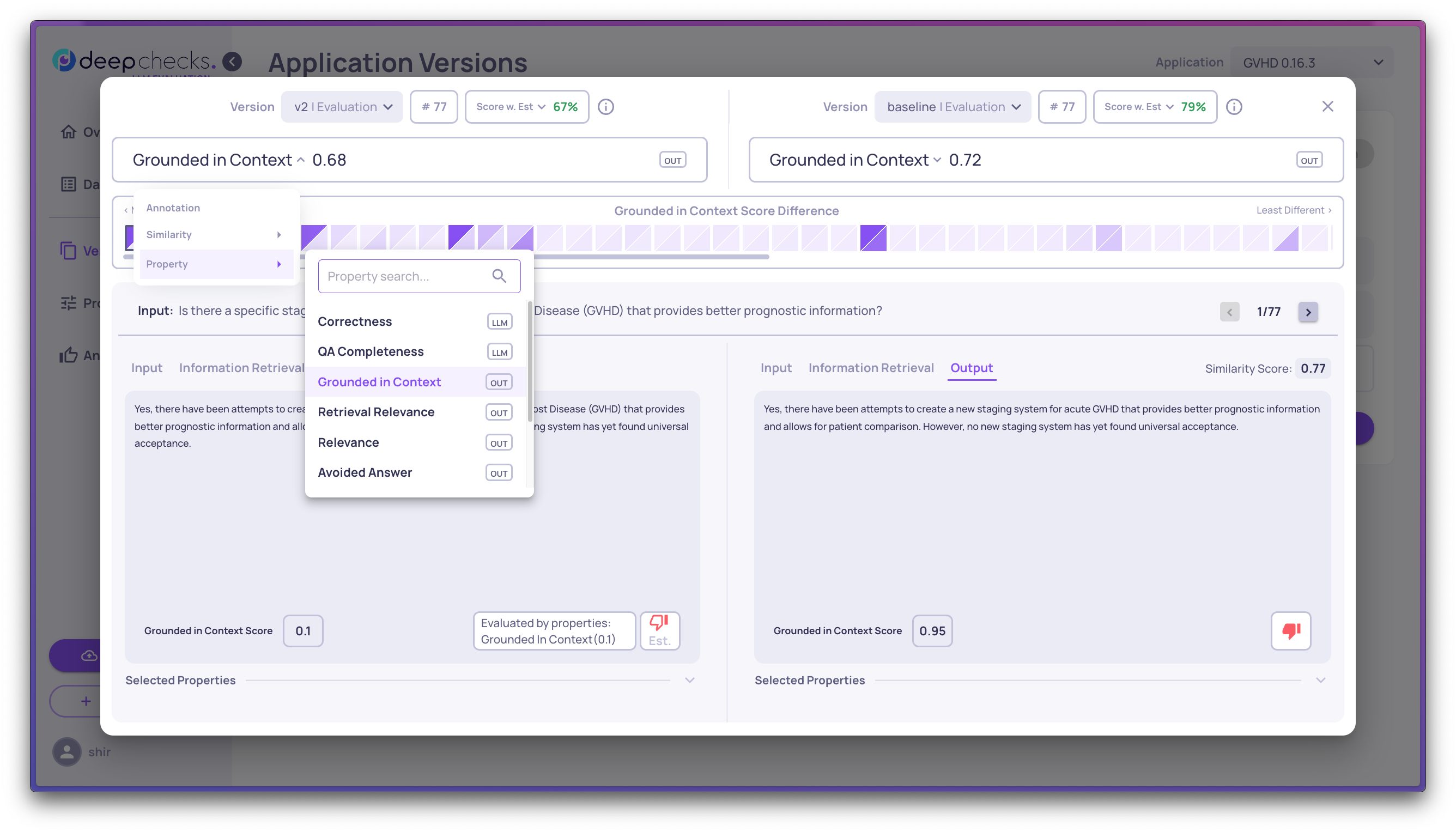

Selecting exactly two versions opens a detailed side-by-side view. The view drills down to the specific differences between the two versions, highlighting interactions whose outputs are most dissimilar or that received different annotations.

You can choose what to compare: where they differ on a specific property, where annotations disagree, or where latency and token usage diverge.
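The same kinds of filters can be approximated offline on matched interaction pairs. The sketch below is a hypothetical illustration, not the platform's implementation: the record layout, the field names (annotation, latency_s, relevance), and the thresholds are all assumptions.

```python
# Hypothetical matched pairs: "a" and "b" hold the same interaction in two versions.
matched = [
    {"user_interaction_id": "q-001",
     "a": {"annotation": "good", "latency_s": 1.2, "relevance": 4.5},
     "b": {"annotation": "good", "latency_s": 1.3, "relevance": 4.4}},
    {"user_interaction_id": "q-002",
     "a": {"annotation": "good", "latency_s": 1.0, "relevance": 4.0},
     "b": {"annotation": "bad", "latency_s": 3.5, "relevance": 2.0}},
    {"user_interaction_id": "q-003",
     "a": {"annotation": "bad", "latency_s": 2.0, "relevance": 2.5},
     "b": {"annotation": "bad", "latency_s": 2.1, "relevance": 4.5}},
]


def annotations_disagree(pair):
    """True when the two versions received different annotations."""
    return pair["a"]["annotation"] != pair["b"]["annotation"]


def differs_on(pair, field, min_gap):
    """True when a numeric field differs by at least min_gap between versions."""
    return abs(pair["a"][field] - pair["b"][field]) >= min_gap


disagreeing = [p["user_interaction_id"] for p in matched if annotations_disagree(p)]
relevance_gap = [p["user_interaction_id"] for p in matched if differs_on(p, "relevance", 1.0)]
latency_gap = [p["user_interaction_id"] for p in matched if differs_on(p, "latency_s", 1.0)]
print(disagreeing, relevance_gap, latency_gap)
```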

Identifying the better version

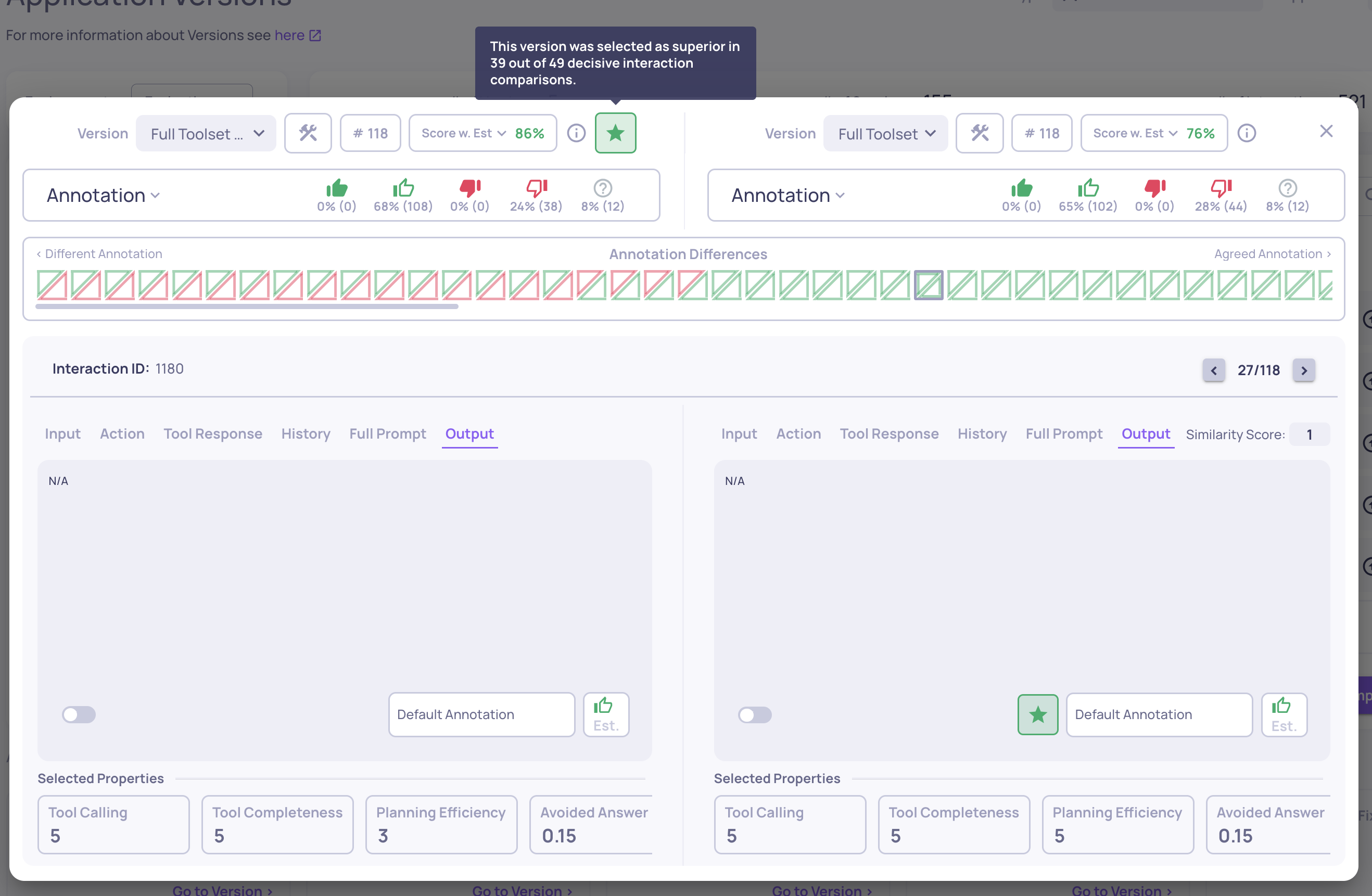

The comparison view uses stars to mark the better-performing version and the better interaction in each pair, based on numerical property analysis:

- Interaction-level star - Each interaction pair is evaluated based on the average of the first three pinned numerical properties. The interaction with the higher average receives a star. If the averages are equal, both receive a star.

- Version-level star - A star appears next to the version that contains a majority of "better" interactions, giving a quick visual indicator of the overall winner.

- Hover for details - Hovering over a star at either level shows a tooltip explaining the rationale.
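The star rules above can be sketched in a few lines. This is an illustrative reading of the description, not Deepchecks' actual code. In particular, how tied interactions count toward the version-level majority is not stated, so the sketch assumes that ties count for neither version and that no version-level star is given when the win counts are equal.

```python
def interaction_stars(props_a, props_b):
    """Return (star_a, star_b) for one interaction pair.

    Each argument holds the scores of the first three pinned numerical
    properties. The higher average gets the star; equal averages star both.
    """
    avg_a = sum(props_a) / len(props_a)
    avg_b = sum(props_b) / len(props_b)
    return avg_a >= avg_b, avg_b >= avg_a


def version_star(pairs):
    """Return "A", "B", or None depending on which version wins more pairs.

    Assumption: tied pairs count for neither version, and equal win
    counts yield no version-level star.
    """
    wins_a = wins_b = 0
    for props_a, props_b in pairs:
        star_a, star_b = interaction_stars(props_a, props_b)
        if star_a and not star_b:
            wins_a += 1
        elif star_b and not star_a:
            wins_b += 1
    if wins_a > wins_b:
        return "A"
    if wins_b > wins_a:
        return "B"
    return None


# Hypothetical property scores for four matched interactions.
example_pairs = [
    ([4, 5, 3], [3, 4, 3]),  # A has the higher average
    ([2, 3, 4], [4, 4, 5]),  # B has the higher average
    ([5, 5, 5], [5, 5, 5]),  # tie: both interactions get a star
    ([4, 4, 5], [3, 5, 4]),  # A has the higher average
]
winner = version_star(example_pairs)
print(winner)
```

Because only the first three pinned properties feed the average, changing which properties are pinned can change which interaction, and therefore which version, receives the star.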
