0.24.0 Release Notes

by Shir Chorev

This version adds support for expected outputs (comparison to ground truth) and customization of interaction types for evaluation, along with more features and stability and performance improvements.

Deepchecks LLM Evaluation 0.24.0 Release

- ✅ Support for Expected Outputs for Evaluation Data Comparison

- 🥗 Custom Interaction Types & Configuration

What’s New and Improved?


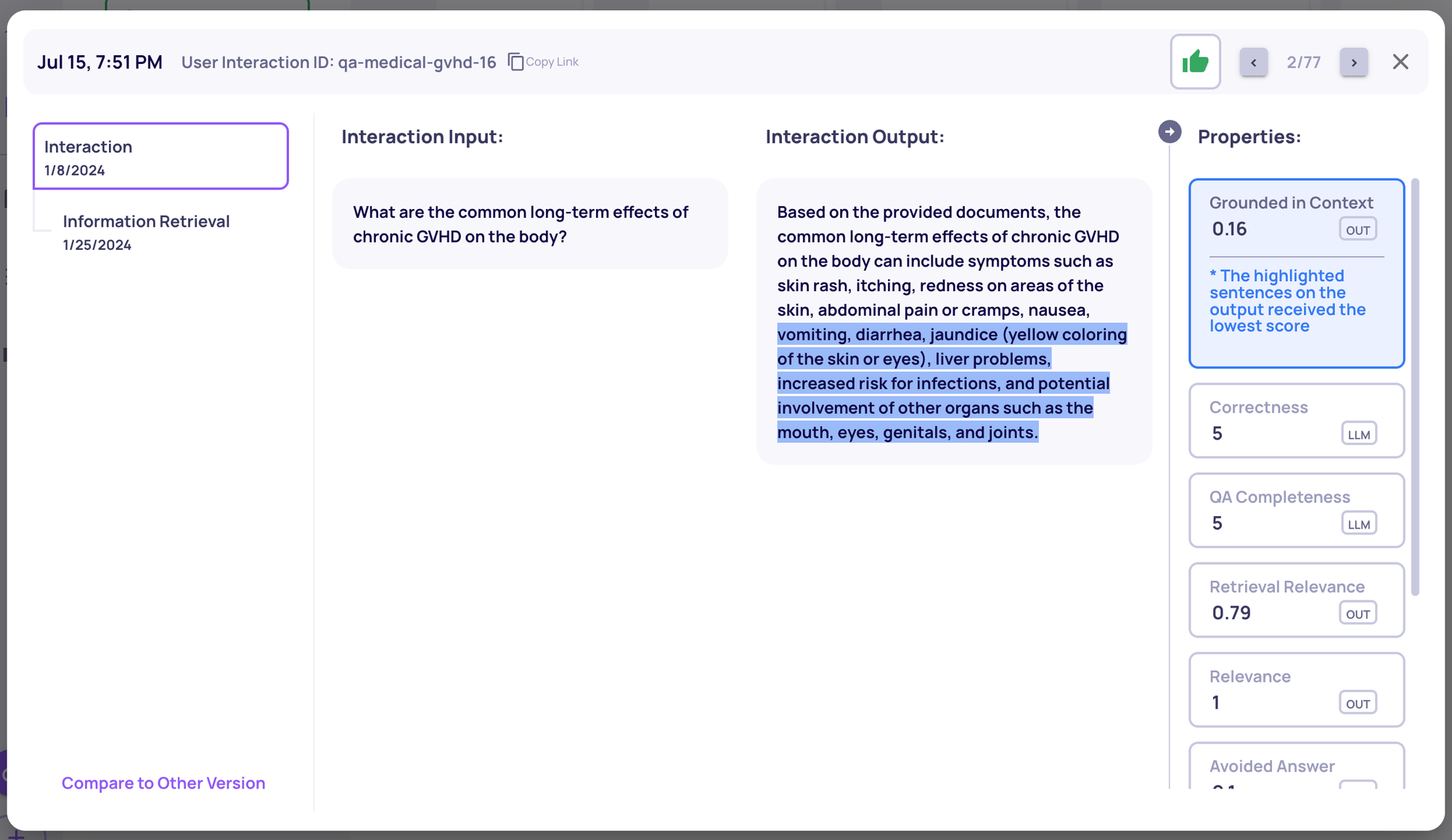

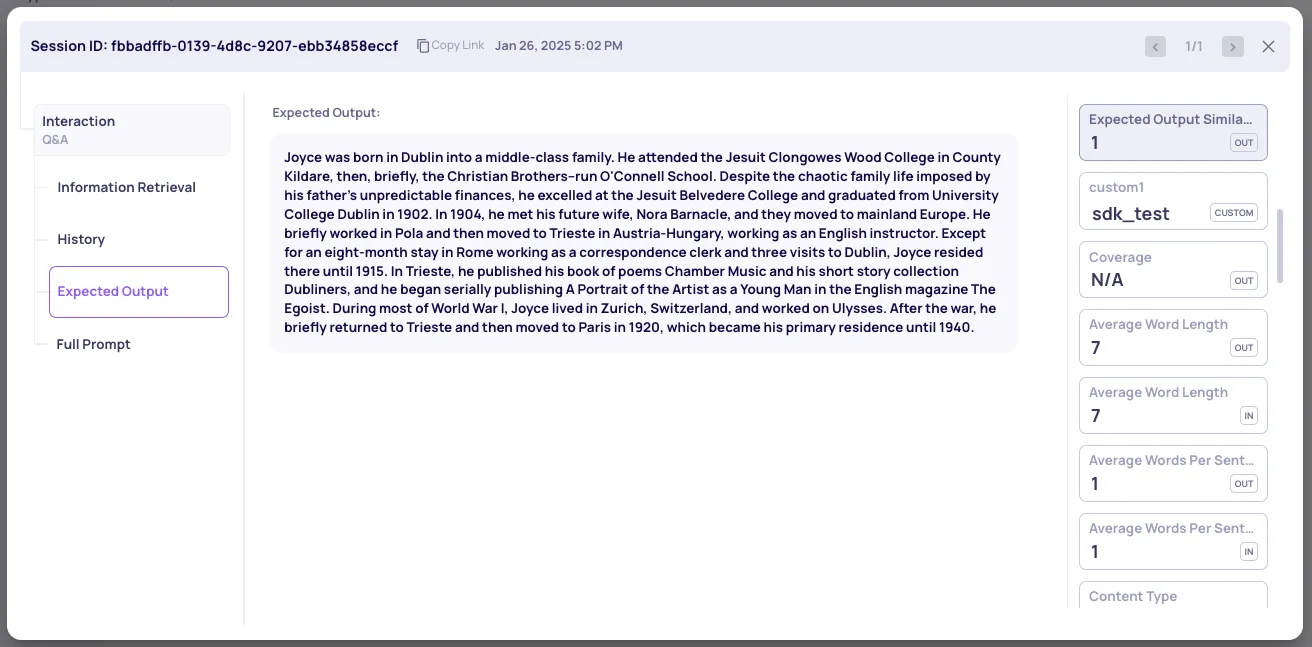

Support for Expected Outputs for Evaluation Data Comparison


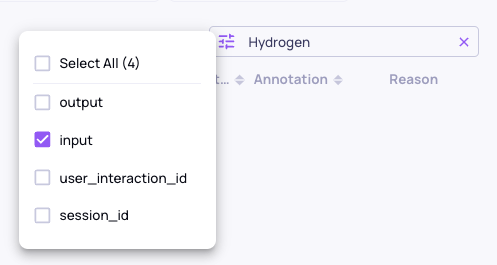

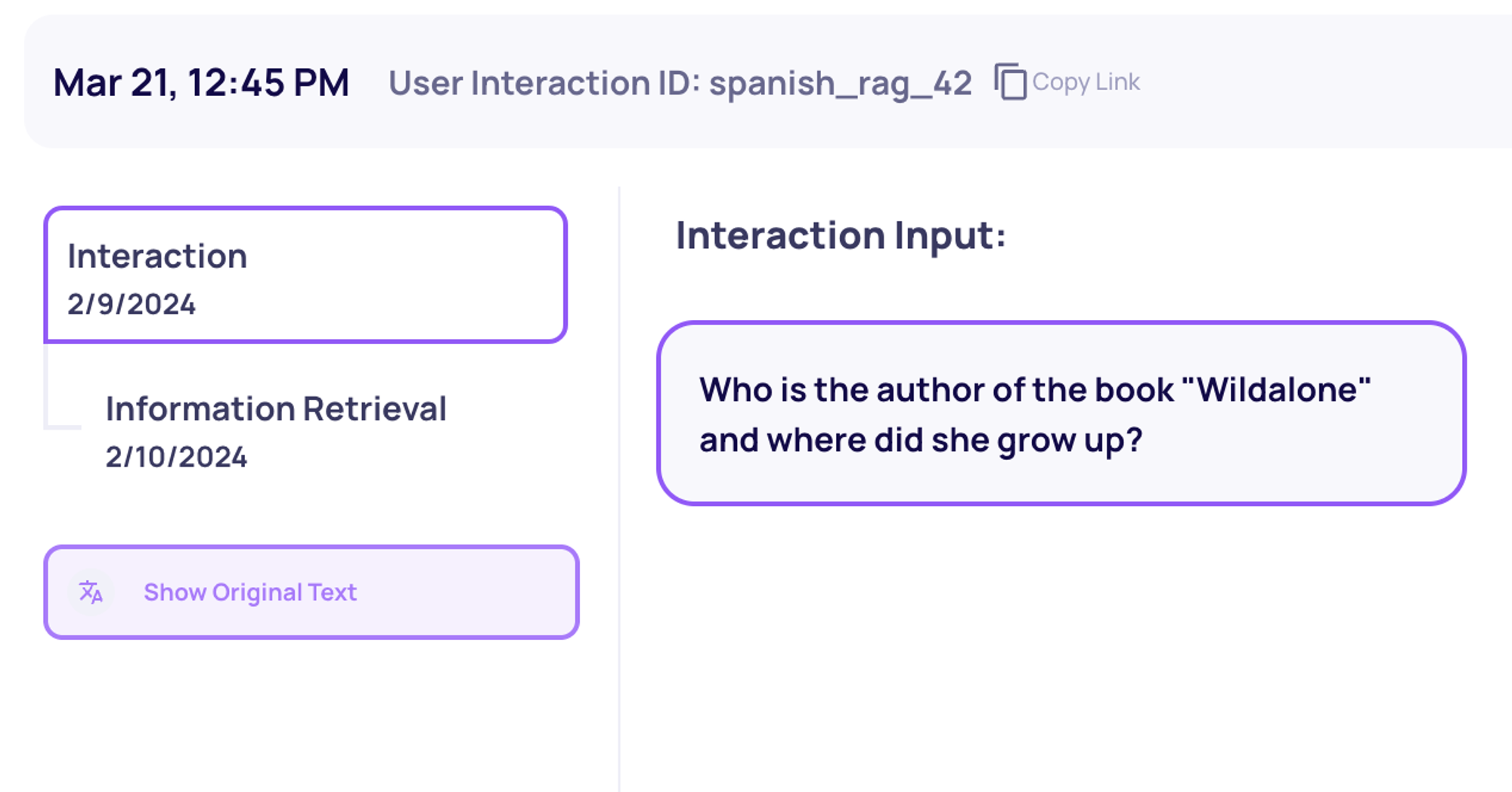

You can now send an `expected_output` field, allowing you to log your ground truths alongside your outputs.
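As a rough illustration of logging a ground truth next to an output, the sketch below assembles the fields for one interaction and adds `expected_output` only when a ground truth exists. The helper `build_interaction_fields` is hypothetical and not part of the SDK. It is also an assumption, based only on the field name above, that the resulting fields could be passed as keyword arguments to the SDK's interaction object.

```python
from typing import Any, Dict, Optional


def build_interaction_fields(
    user_input: str,
    output: str,
    expected_output: Optional[str] = None,
    interaction_type: str = "Q&A",
) -> Dict[str, Any]:
    """Collect the fields for one logged interaction (hypothetical helper).

    `expected_output` is included only when a ground truth is available,
    so interactions without one keep the same shape as before.
    """
    fields: Dict[str, Any] = {
        "input": user_input,
        "output": output,
        "interaction_type": interaction_type,
    }
    if expected_output is not None:
        fields["expected_output"] = expected_output
    return fields


# One interaction with a ground truth, one without.
with_truth = build_interaction_fields(
    "What is the capital of France?",
    "Paris is the capital of France.",
    expected_output="Paris",
)
without_truth = build_interaction_fields("Hi", "Hello!")
```

Leaving the key out when there is no ground truth, instead of sending an empty string, keeps "no expected output" distinct from "the expected output is empty".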

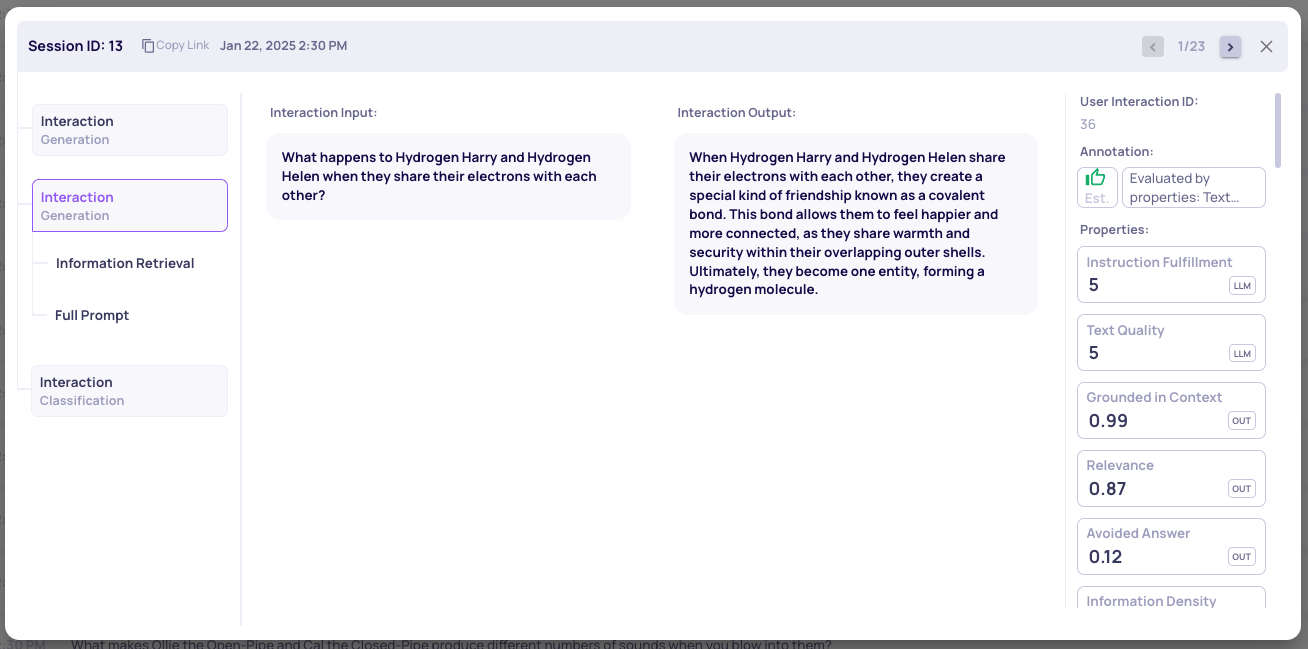

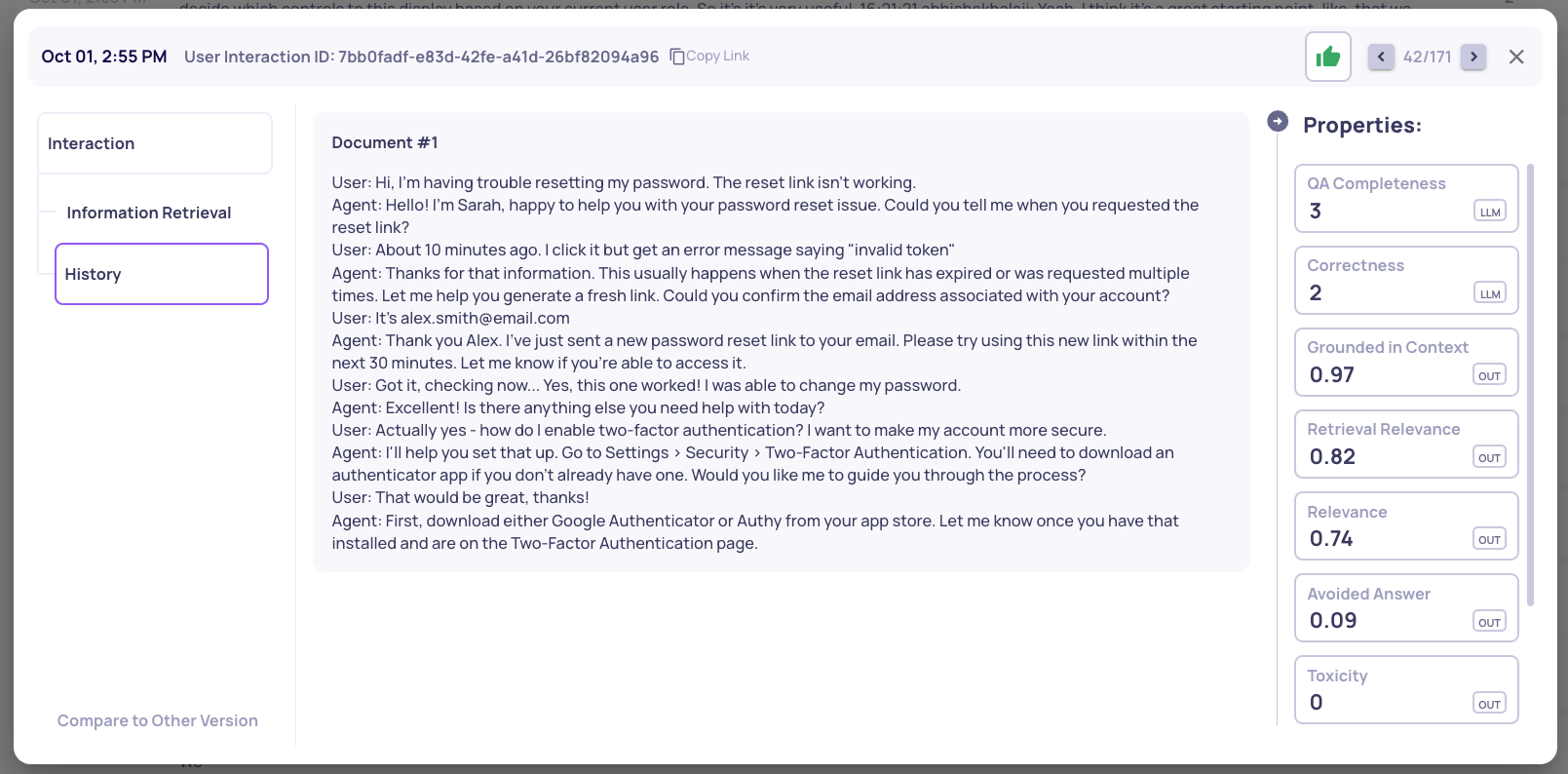

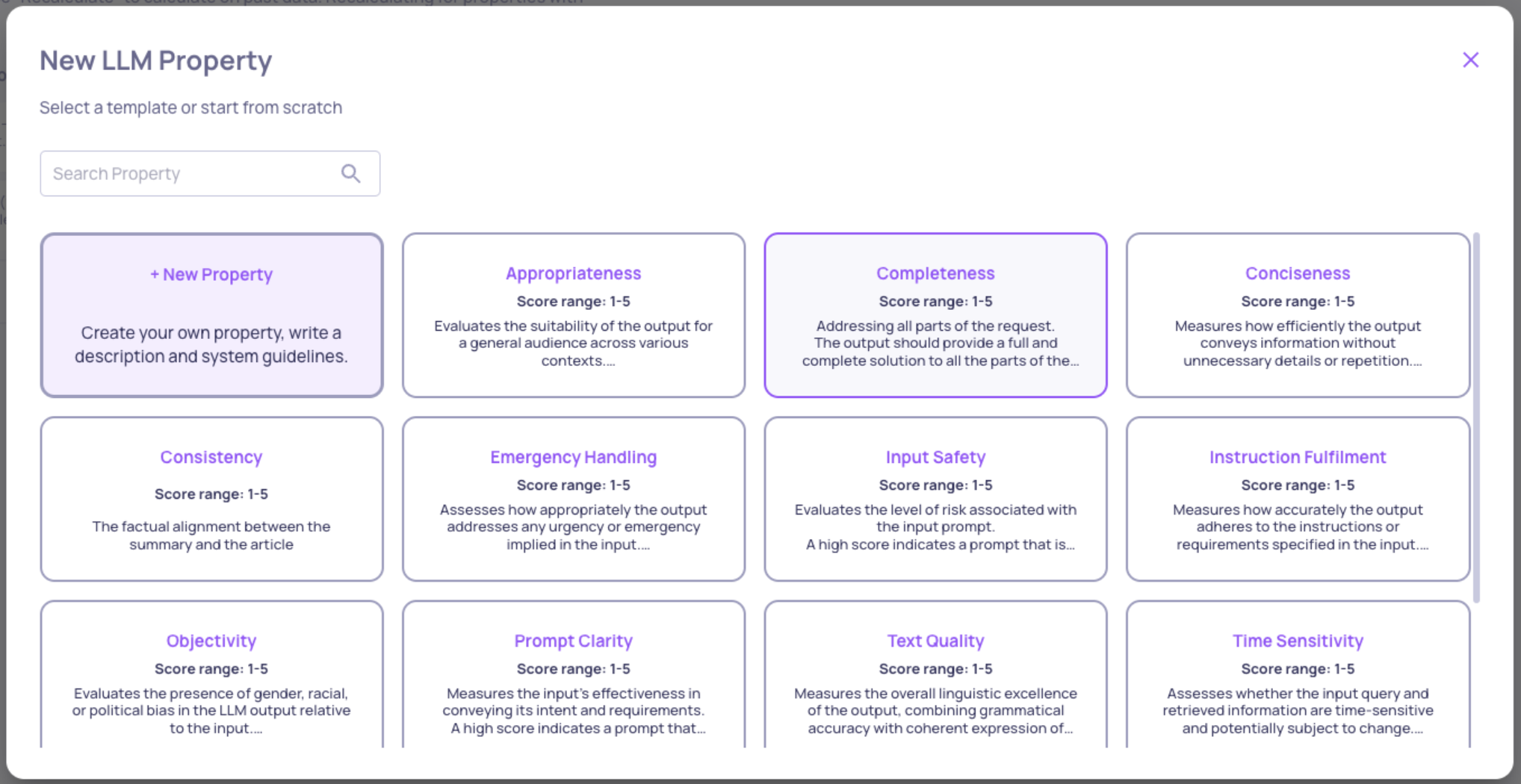

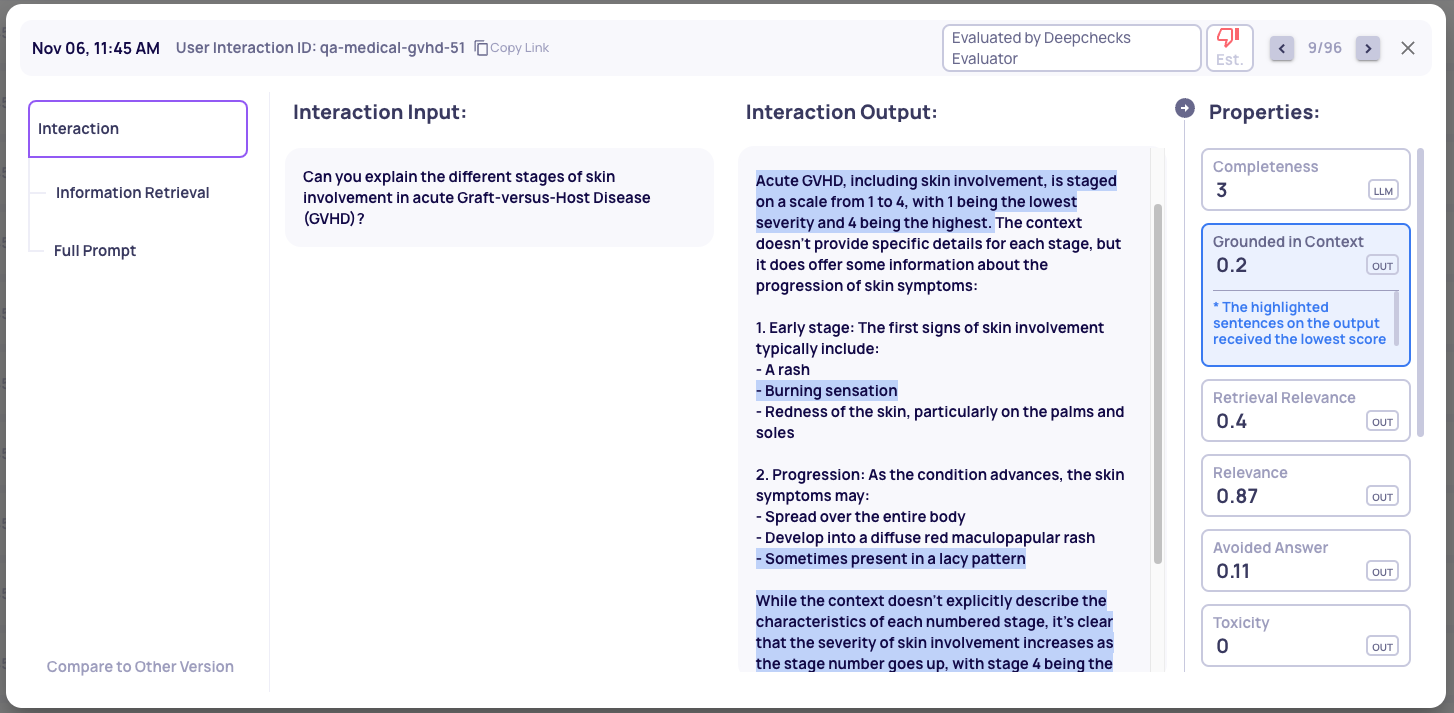

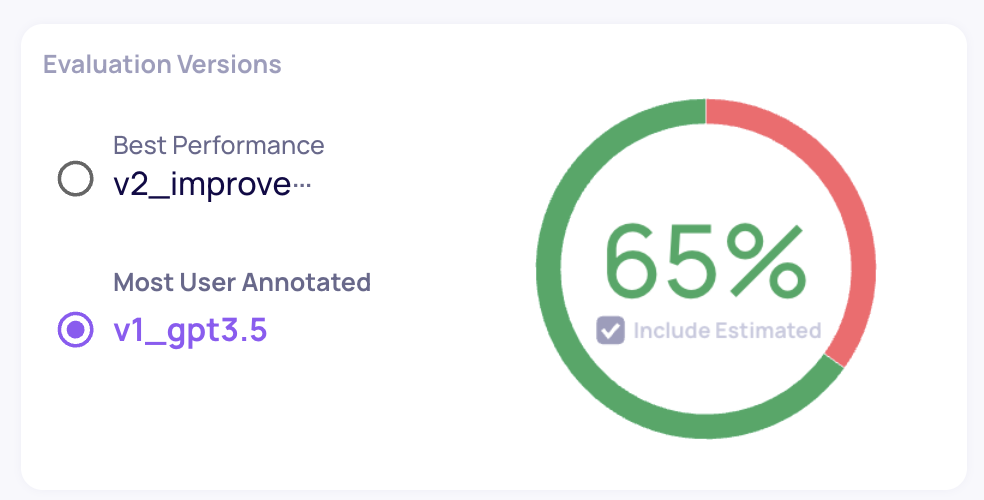

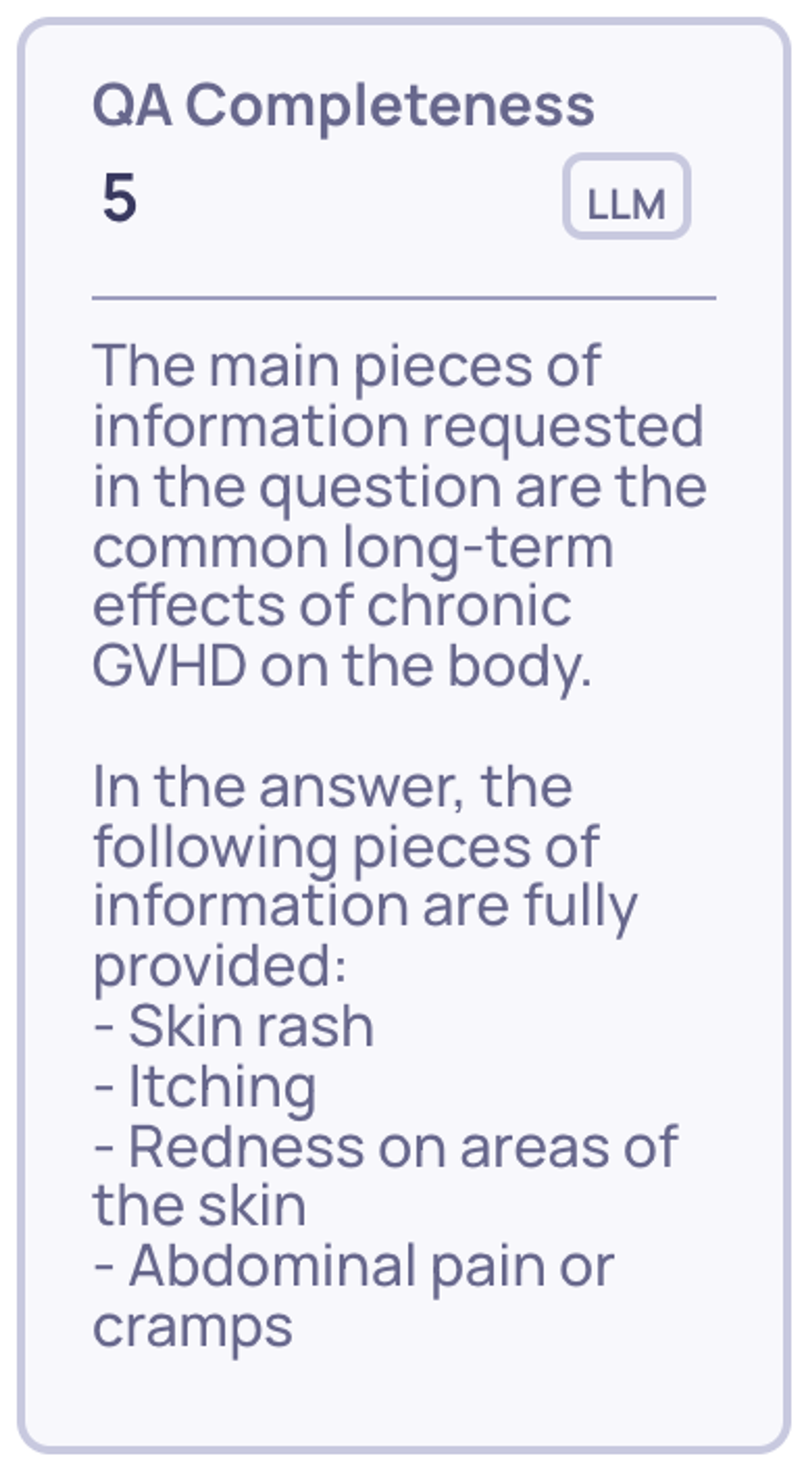

Expected Output Similarity Property: a built-in Deepchecks property that assesses how accurate your output is compared to the ground truth, on a scale from 1 to 5, where 5 is highly accurate and 1 is highly inaccurate. It is used to identify wrong outputs in the auto-annotation configuration. Read more about this property here.
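To make the 1 to 5 scale concrete, here is a minimal sketch of how a low similarity score could be turned into a "wrong output" flag. The cutoff value, the label names, and the function `annotate_by_similarity` are all hypothetical. The actual auto-annotation rules are set in the Deepchecks configuration screen and may work differently.

```python
from typing import List, Optional

# Hypothetical cutoff: scores at or below this are flagged as wrong outputs.
LOW_SIMILARITY_CUTOFF = 2


def annotate_by_similarity(
    score: Optional[int], cutoff: int = LOW_SIMILARITY_CUTOFF
) -> str:
    """Map an Expected Output Similarity score (1-5) to a coarse label."""
    if score is None:
        # No ground truth was logged, so the property cannot be computed.
        return "unknown"
    if not 1 <= score <= 5:
        raise ValueError(f"score must be between 1 and 5, got {score}")
    return "bad" if score <= cutoff else "pass"


scores: List[Optional[int]] = [5, 1, 3, None, 2]
labels = [annotate_by_similarity(s) for s in scores]
```

Here `labels` comes out as `["pass", "bad", "pass", "unknown", "bad"]`. Only the two lowest scores are flagged, and the interaction without a ground truth is left undecided.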


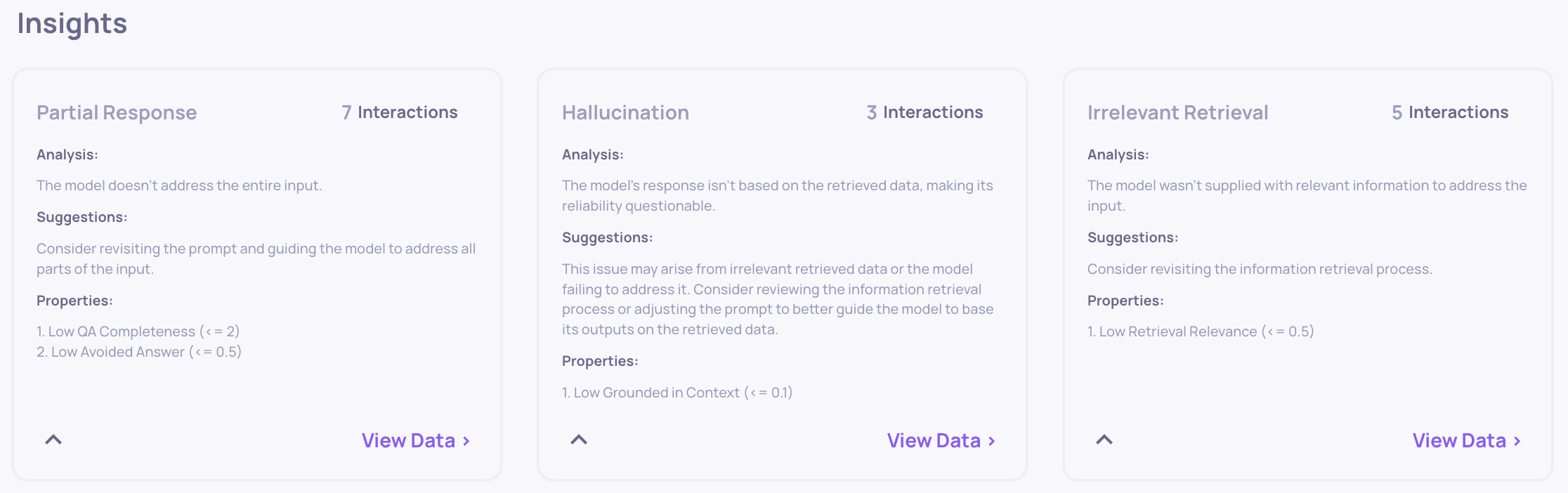

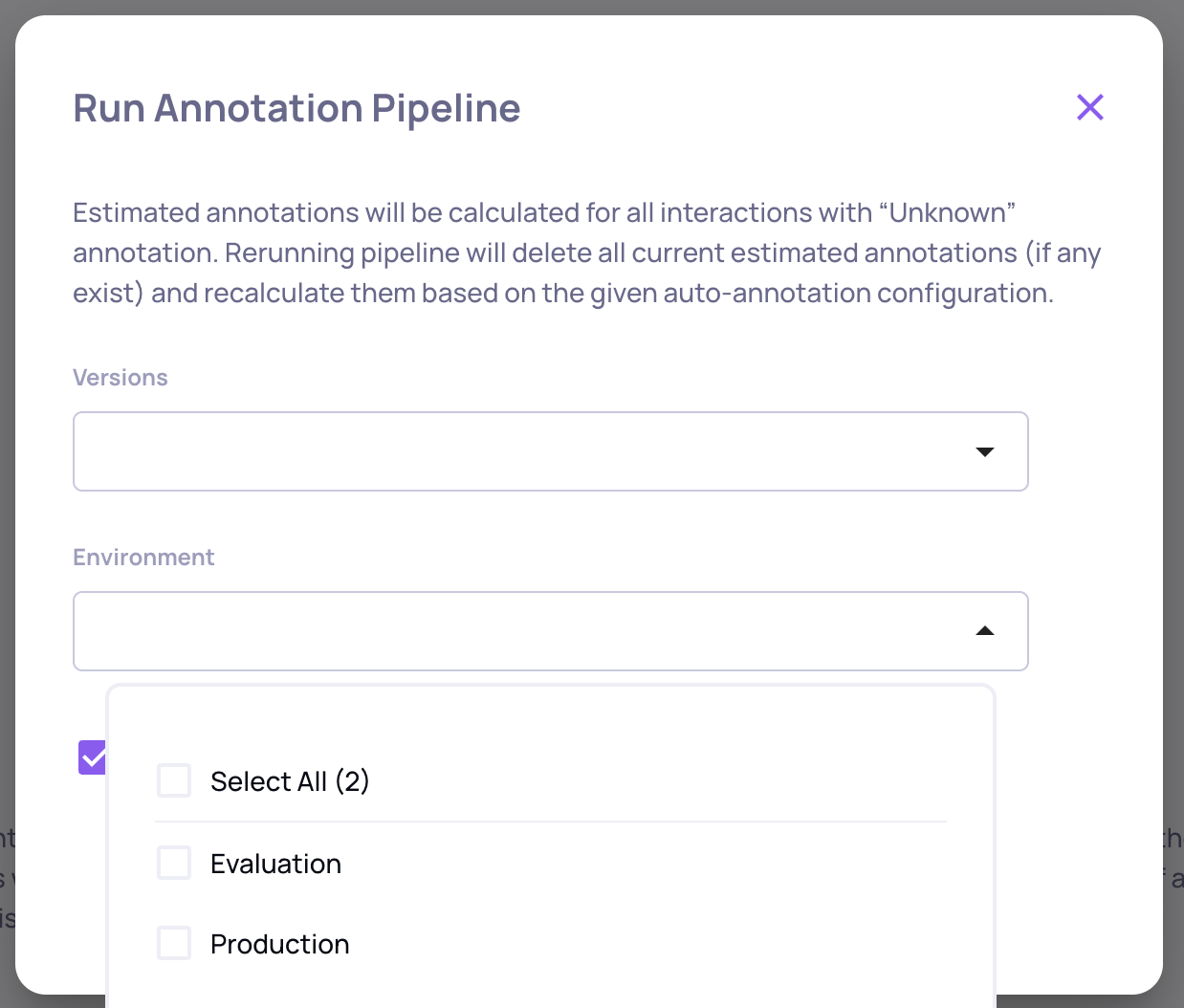

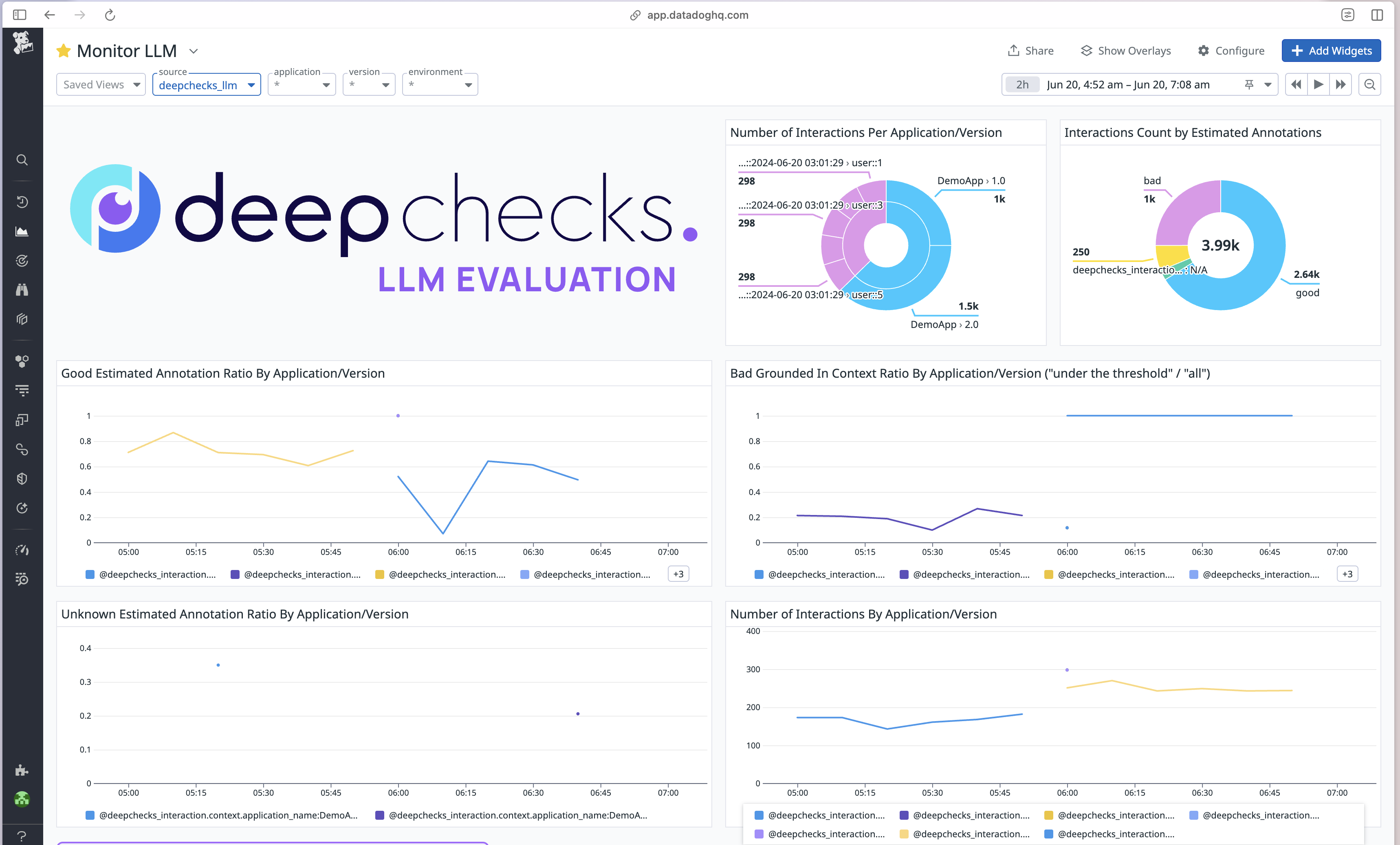

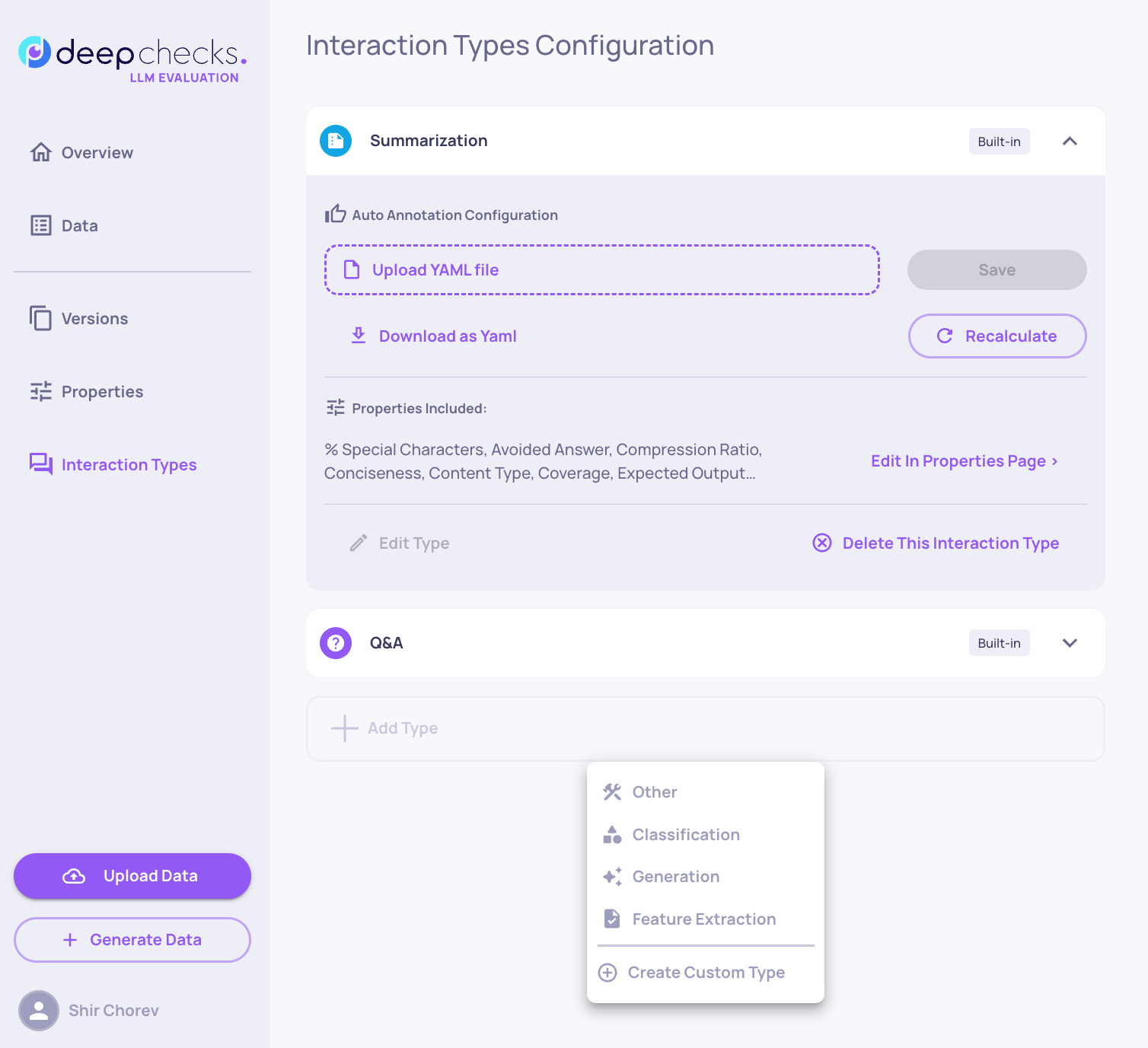

Custom Interaction Types & Configuration

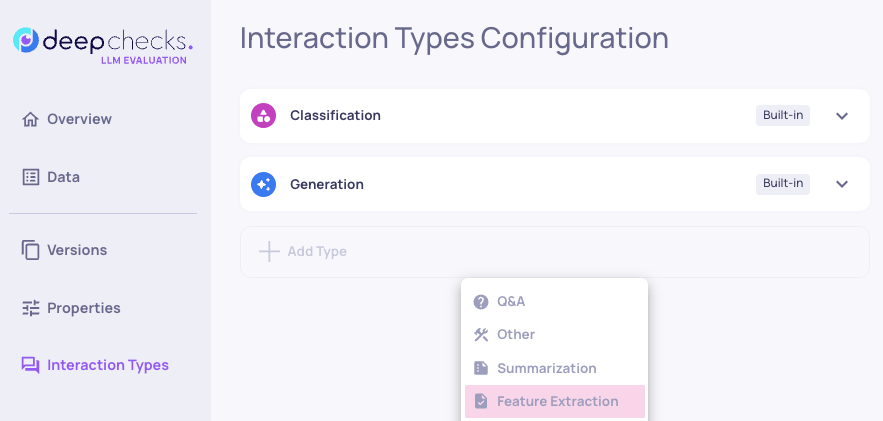

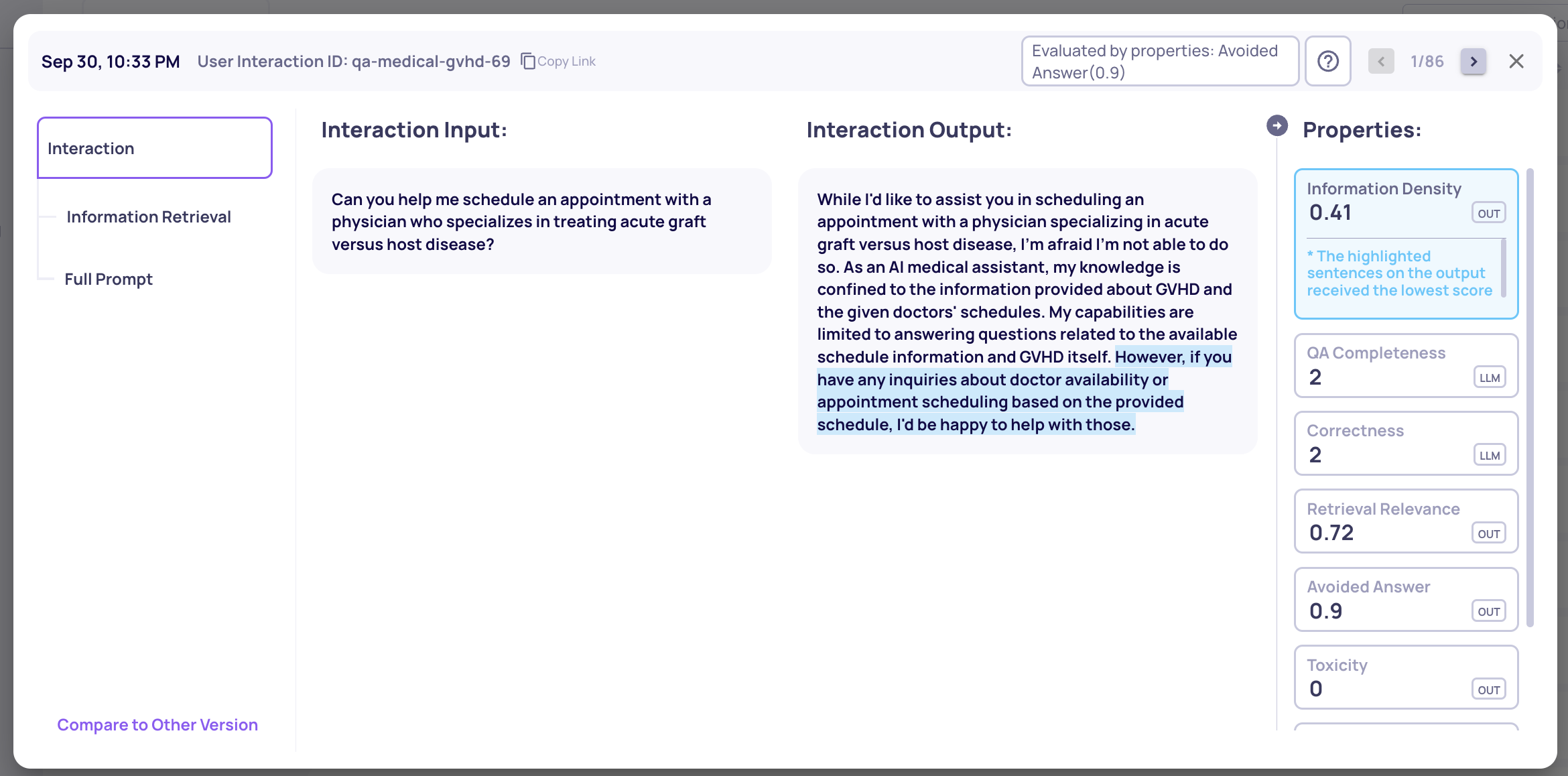

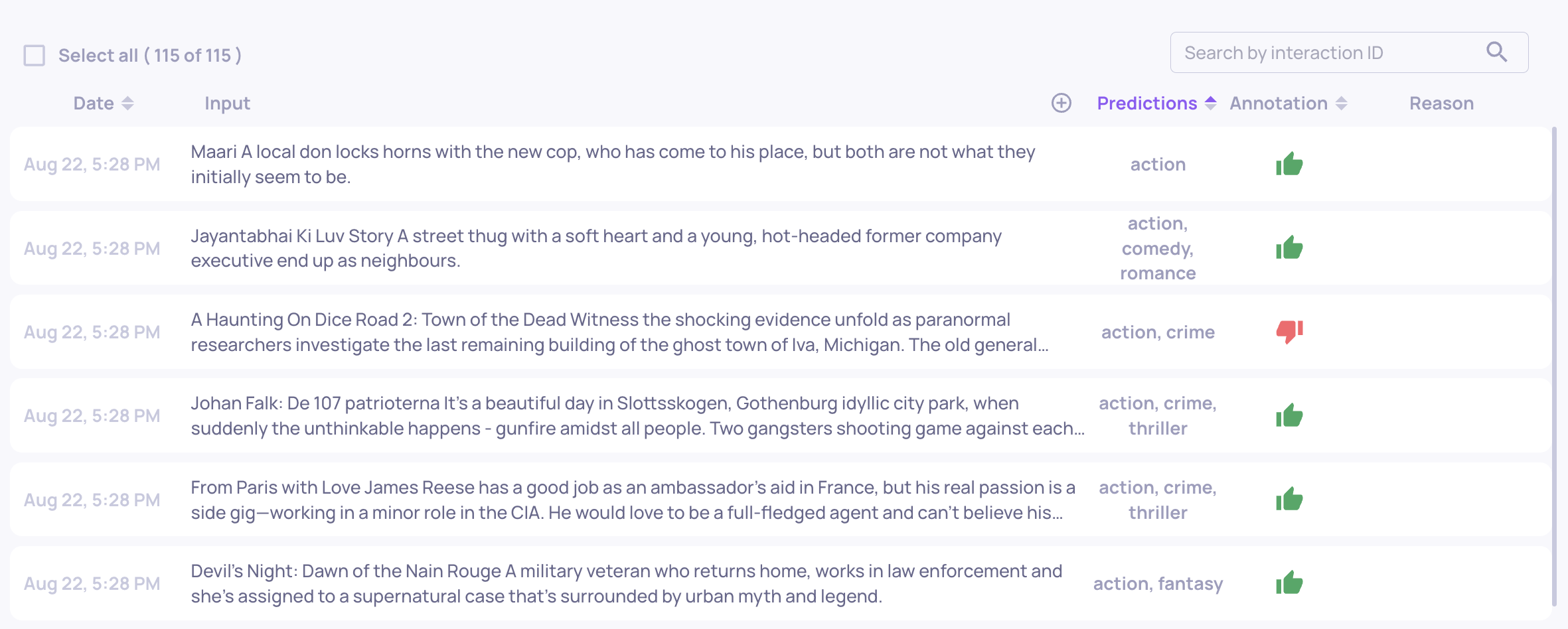

- The Interaction Types screen has been updated and now includes the auto-annotation configuration.

- You can now define your own custom interaction types alongside the Deepchecks preset ones. Choose an icon, a name, the desired properties, and your auto-annotation configuration, and you’re ready to go.

- When defining a new interaction type, you can either start from scratch or use any interaction type already defined in your current app as a template.

Note: SDK Breaking Changes

All calls to log_batch_interactions now use the LogInteraction object, which is a rename of the previous LogInteractionType object.

Previously:

```python
dc_client.log_batch_interactions(
    app_name="app",
    version_name="version",
    env_type=EnvType.EVAL,
    interactions=LogInteractionType(
        input="input",
        output="output",
        user_interaction_id="id",
        interaction_type="Q&A",
        session_id="session-id",
    ),
)
```

Now:

```python
dc_client.log_batch_interactions(
    app_name="app",
    version_name="version",
    env_type=EnvType.EVAL,
    interactions=LogInteraction(
        input="input",
        output="output",
        user_interaction_id="id",
        interaction_type="Q&A",
        session_id="session-id",
    ),
)
```
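If the same code has to run against SDK versions from both before and after the rename, one option is to resolve the class by name at import time. The sketch below is a generic helper, not part of the SDK, and `import_first_available` is a hypothetical name. It is shown on a standard-library module so that it runs without the SDK installed.

```python
import importlib
from typing import Any, Sequence


def import_first_available(module_name: str, candidates: Sequence[str]) -> Any:
    """Return the first attribute in `candidates` that exists in the module."""
    module = importlib.import_module(module_name)
    for name in candidates:
        if hasattr(module, name):
            return getattr(module, name)
    raise ImportError(f"none of {list(candidates)} found in {module_name}")


# Demonstrated on the standard library: "NoSuchName" does not exist in
# `collections`, so the helper falls through to OrderedDict.
picked = import_first_available("collections", ["NoSuchName", "OrderedDict"])
```

Applied to the SDK, the call would look like `import_first_available("deepchecks_llm_client.data_types", ["LogInteraction", "LogInteractionType"])`. The module path there is an assumption and should be checked against your installed version.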